ADY - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs on Adyghe (ADY) Wikipedia data. The report covers tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

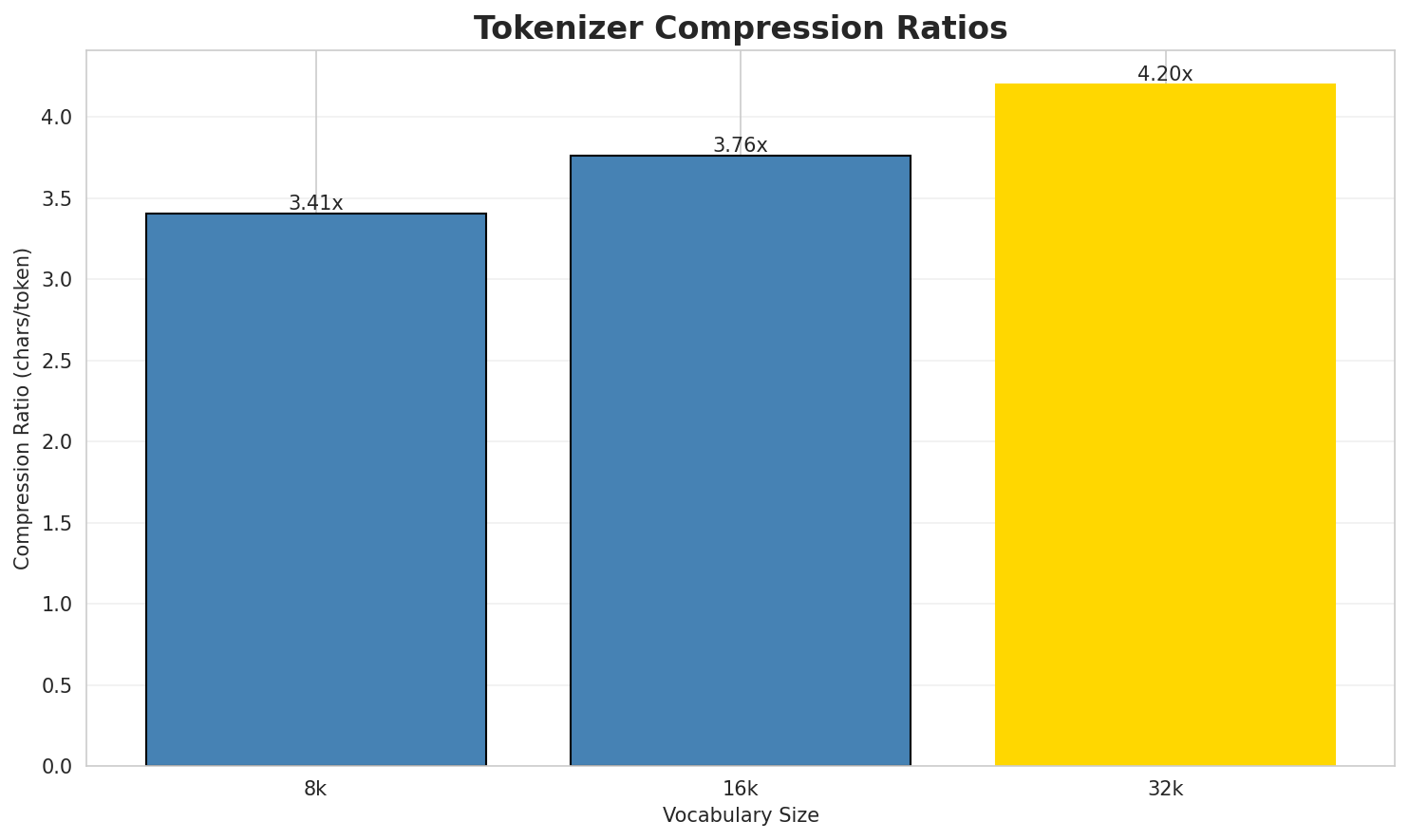

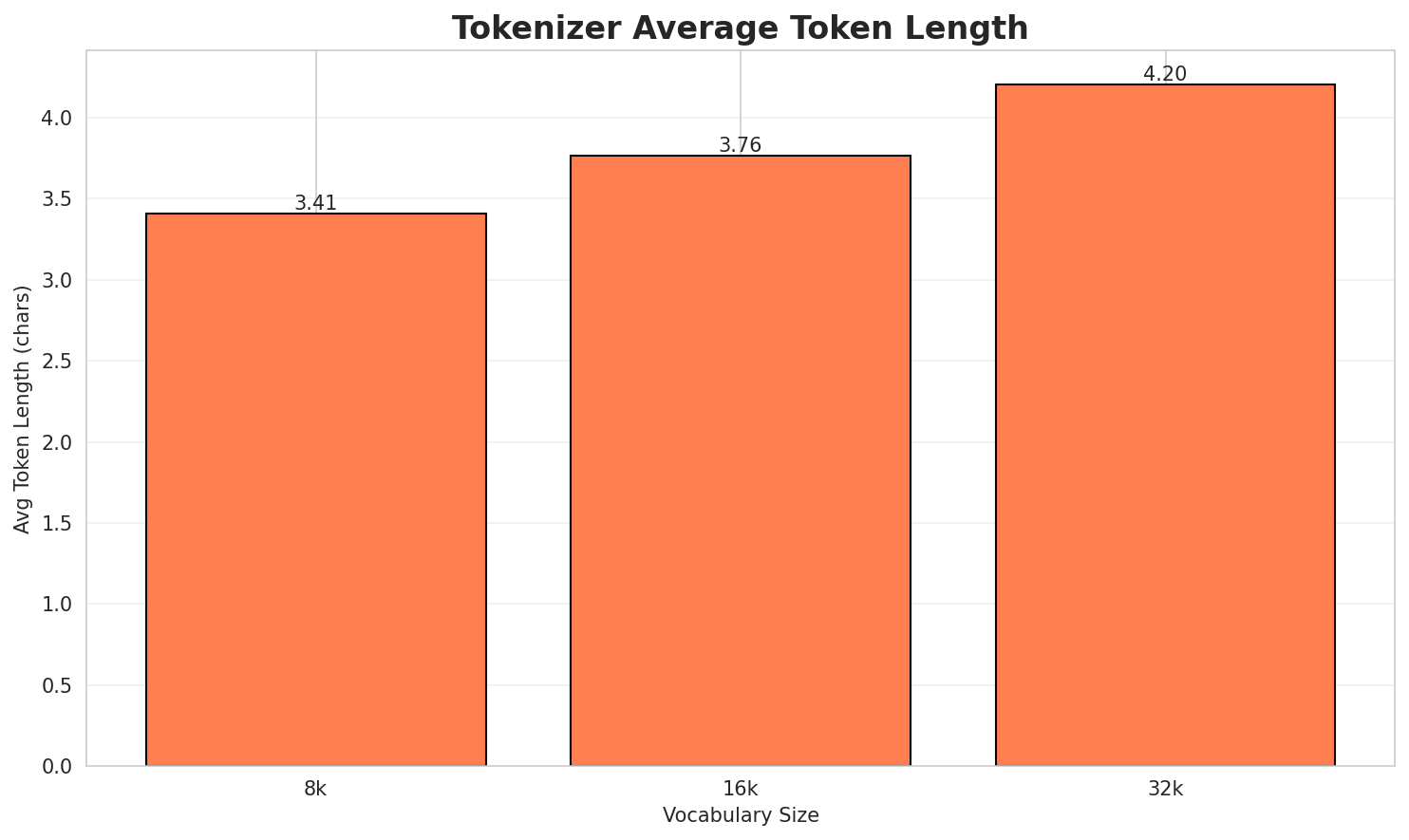

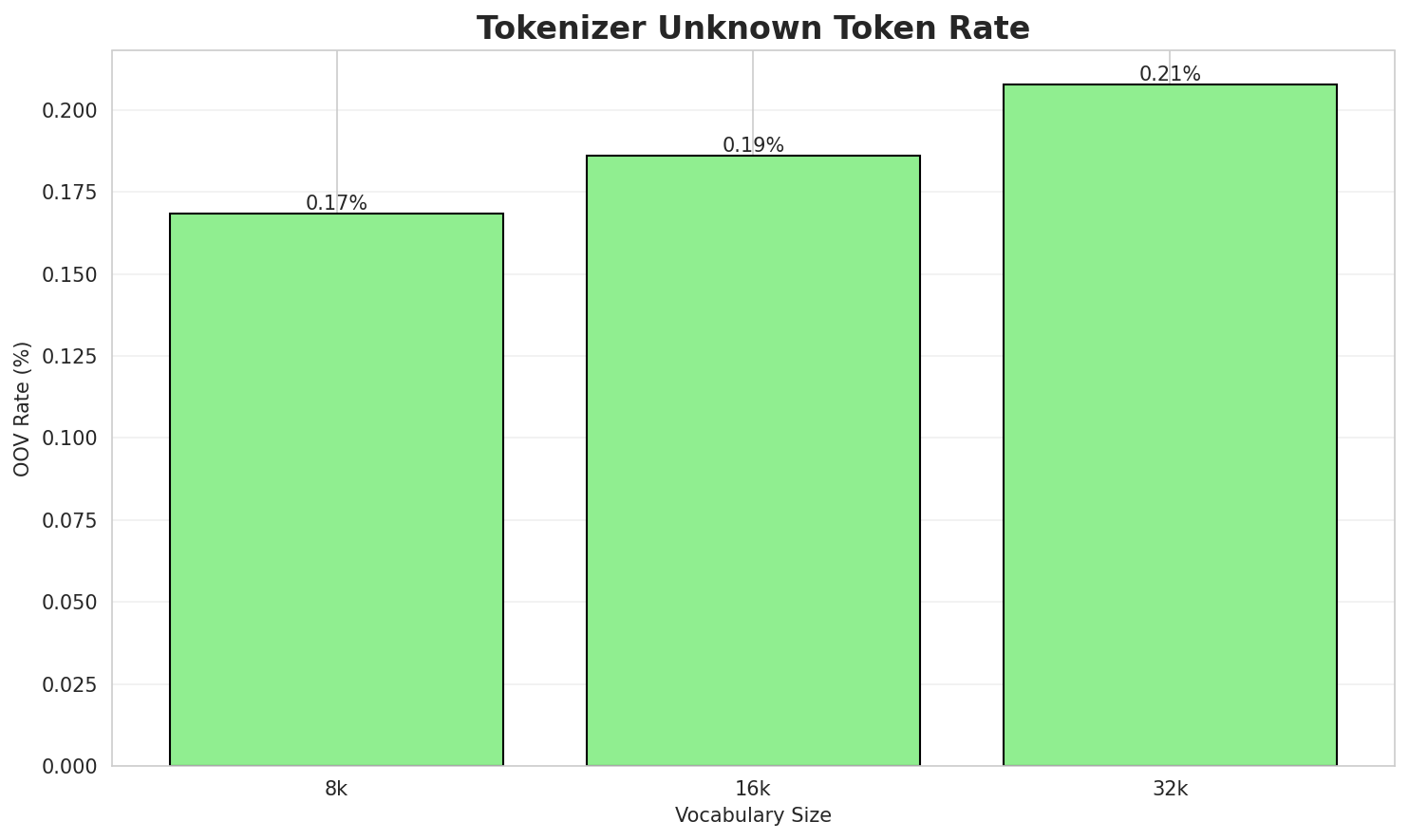

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.442x | 3.45 | 0.1638% | 134,283 |

| 16k | 3.798x | 3.80 | 0.1808% | 121,676 |

| 32k | 4.231x 🏆 | 4.24 | 0.2014% | 109,215 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Шъхьафит — Ашэ псыхъо иджабгъу нэпкъы тес Адыгэ къуадж. районым хахьэ. Хым зы пэ...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁шъхьафит ▁— ▁ашэ ▁псыхъо ▁иджабгъу ▁нэпкъы ▁тес ▁адыгэ ▁къуадж . ... (+7 more) | 17 |

| 16k | ▁шъхьафит ▁— ▁ашэ ▁псыхъо ▁иджабгъу ▁нэпкъы ▁тес ▁адыгэ ▁къуадж . ... (+7 more) | 17 |

| 32k | ▁шъхьафит ▁— ▁ашэ ▁псыхъо ▁иджабгъу ▁нэпкъы ▁тес ▁адыгэ ▁къуадж . ... (+7 more) | 17 |

Sample 2: thumb Америкэ - чӀынэлъэшхухэр Iут зэхэт (Къыблэ Америкэмрэ, Ишъхъэрэмрэ) Тыгъэк...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁thumb ▁америкэ ▁- ▁чӏы нэлъэ шхухэр ▁i ут ▁зэхэт ▁( ... (+17 more) | 27 |

| 16k | ▁thumb ▁америкэ ▁- ▁чӏынэлъэшхухэр ▁i ут ▁зэхэт ▁( къыблэ ▁америкэмрэ ... (+13 more) | 23 |

| 32k | ▁thumb ▁америкэ ▁- ▁чӏынэлъэшхухэр ▁i ут ▁зэхэт ▁( къыблэ ▁америкэмрэ ... (+11 more) | 21 |

Sample 3: thumb Мамуныр мэз псэушъхьэхэмэ а щыщ. Мамунхэр чыг дэпшэиэным лъэшэу Мамуным и ...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁thumb ▁мамун ыр ▁мэз ▁псэушъхьэхэмэ ▁а ▁щыщ . ▁мамун хэр ... (+22 more) | 32 |

| 16k | ▁thumb ▁мамуныр ▁мэз ▁псэушъхьэхэмэ ▁а ▁щыщ . ▁мамунхэр ▁ч ыг ... (+14 more) | 24 |

| 32k | ▁thumb ▁мамуныр ▁мэз ▁псэушъхьэхэмэ ▁а ▁щыщ . ▁мамунхэр ▁чыг ▁дэпшэиэным ... (+10 more) | 20 |

Key Findings

- Best Compression: 32k achieves 4.231x compression

- Lowest UNK Rate: 8k with 0.1638% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

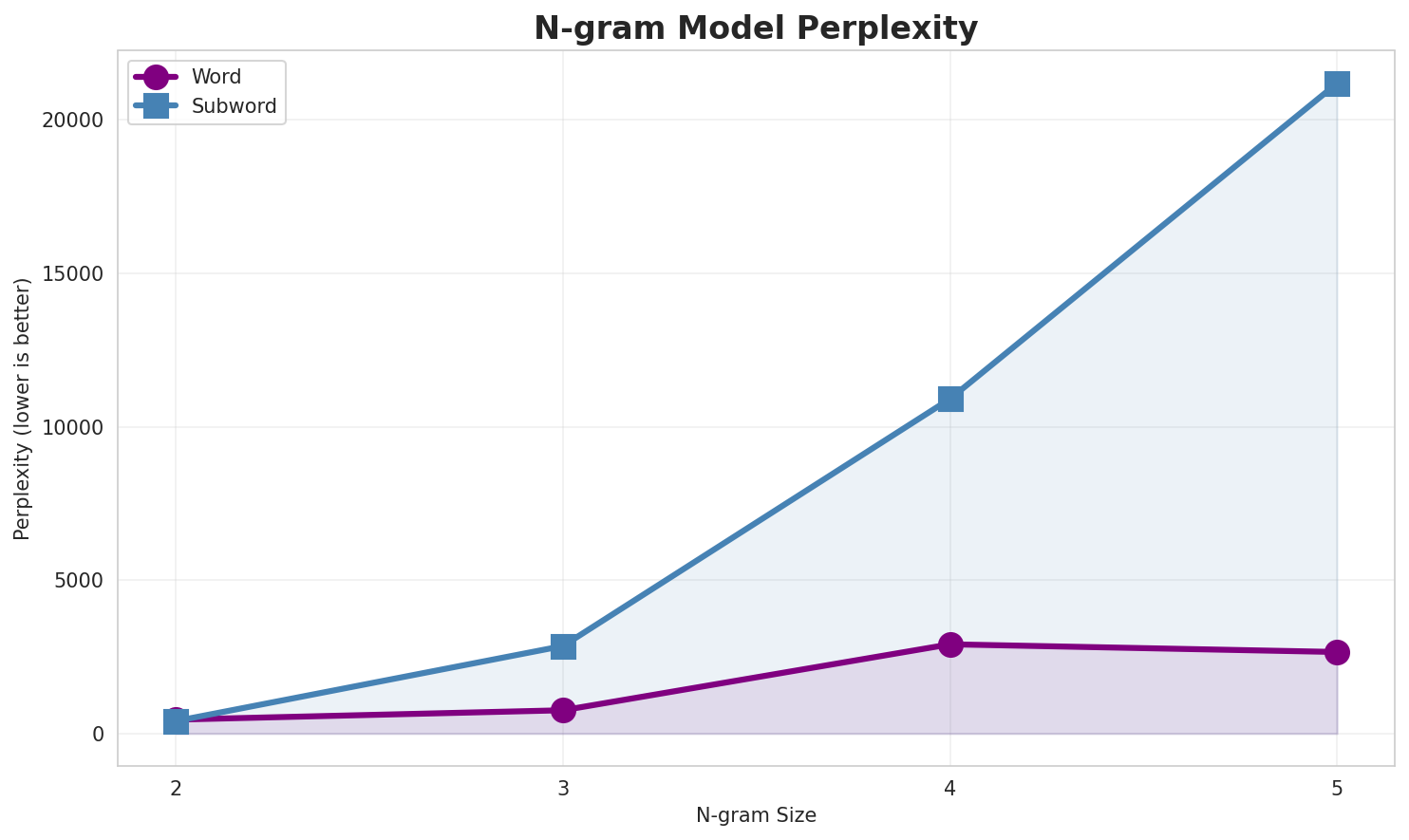

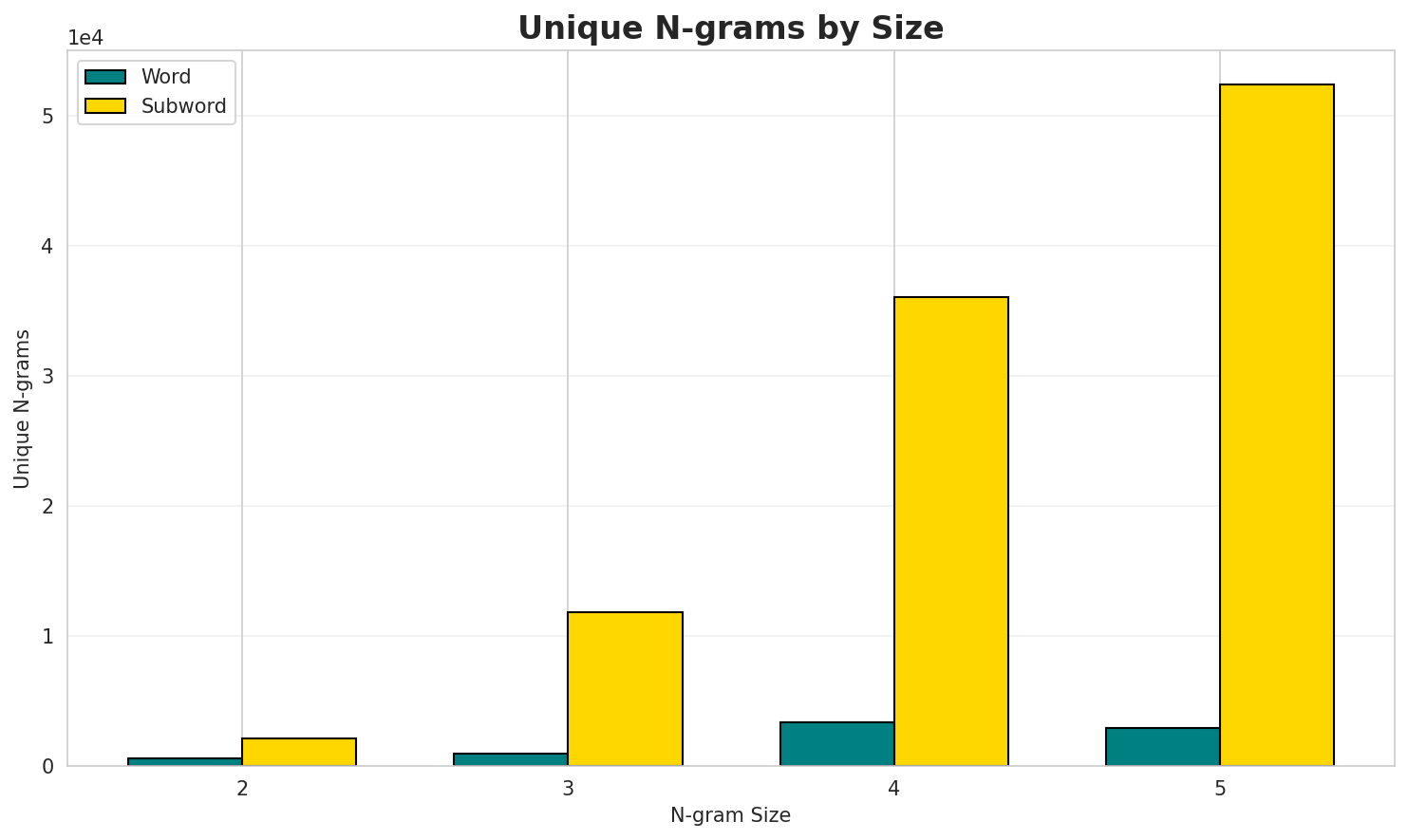

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 418 | 8.71 | 593 | 45.3% | 100.0% |

| 2-gram | Subword | 399 🏆 | 8.64 | 2,072 | 57.0% | 97.4% |

| 3-gram | Word | 706 | 9.46 | 922 | 33.9% | 100.0% |

| 3-gram | Subword | 2,788 | 11.44 | 11,614 | 24.5% | 65.1% |

| 4-gram | Word | 2,848 | 11.48 | 3,264 | 13.1% | 44.3% |

| 4-gram | Subword | 10,651 | 13.38 | 35,316 | 12.4% | 39.6% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | нэбгырэ млн | 169 |

| 2 | къехъу щэпсэу | 104 |

| 3 | картым тетэу | 100 |

| 4 | м къехъу | 89 |

| 5 | дло м | 87 |

3-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | м къехъу щэпсэу | 76 |

| 2 | къехъу щэпсэу хэгэгум | 70 |

| 3 | адыгэ республикэм и | 48 |

| 4 | дло м хахьэ | 44 |

| 5 | м хахьэ хэгъэгу | 39 |

4-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | м къехъу щэпсэу хэгэгум | 45 |

| 2 | дло м хахьэ хэгъэгу | 39 |

| 3 | еуропэм хэт къэралыгъу къэлэ | 23 |

| 4 | америкэм ит къэралыгъу къэлэ | 19 |

| 5 | азием ит къэралыгъу къэлэ | 18 |

2-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | г ъ | 9,349 |

| 2 | ъ э | 9,255 |

| 3 | э _ | 8,719 |

| 4 | м _ | 7,823 |

| 5 | э р | 6,778 |

3-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | г ъ э | 4,967 |

| 2 | _ к ъ | 4,149 |

| 3 | э м _ | 3,582 |

| 4 | ы г ъ | 3,357 |

| 5 | э р _ | 3,016 |

4-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | ы г ъ э | 1,903 |

| 2 | х э р _ | 1,450 |

| 3 | а г ъ э | 1,351 |

| 4 | х э м _ | 1,305 |

| 5 | _ к ъ э | 1,289 |

Key Findings

- Best Perplexity: 2-gram (subword) with 399

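- Entropy Trend: Increases with larger n-grams in this run (word: 8.71 → 9.46 → 11.48 bits), most likely because higher-order n-grams are sparse in a corpus of this size

- Coverage: Top-1000 patterns cover ~40% of corpus for 4-grams (44.3% word, 39.6% subword); for 2-grams the figure is 97.4% to 100.0%

- Recommendation: 2-gram models give the lowest perplexity on this corpus; 4-gram models capture longer context but at a much higher perplexity (2,848 word, 10,651 subword)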

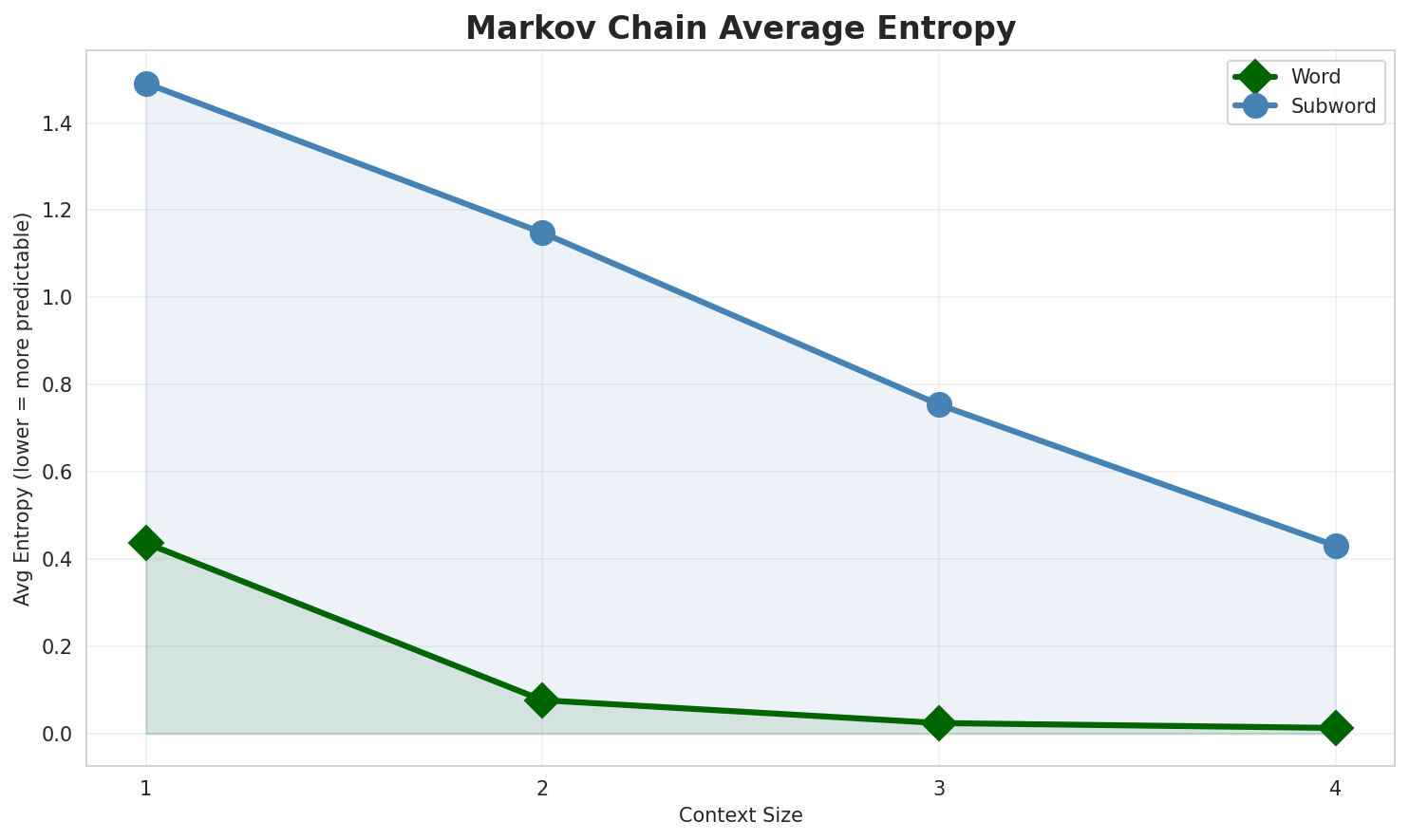

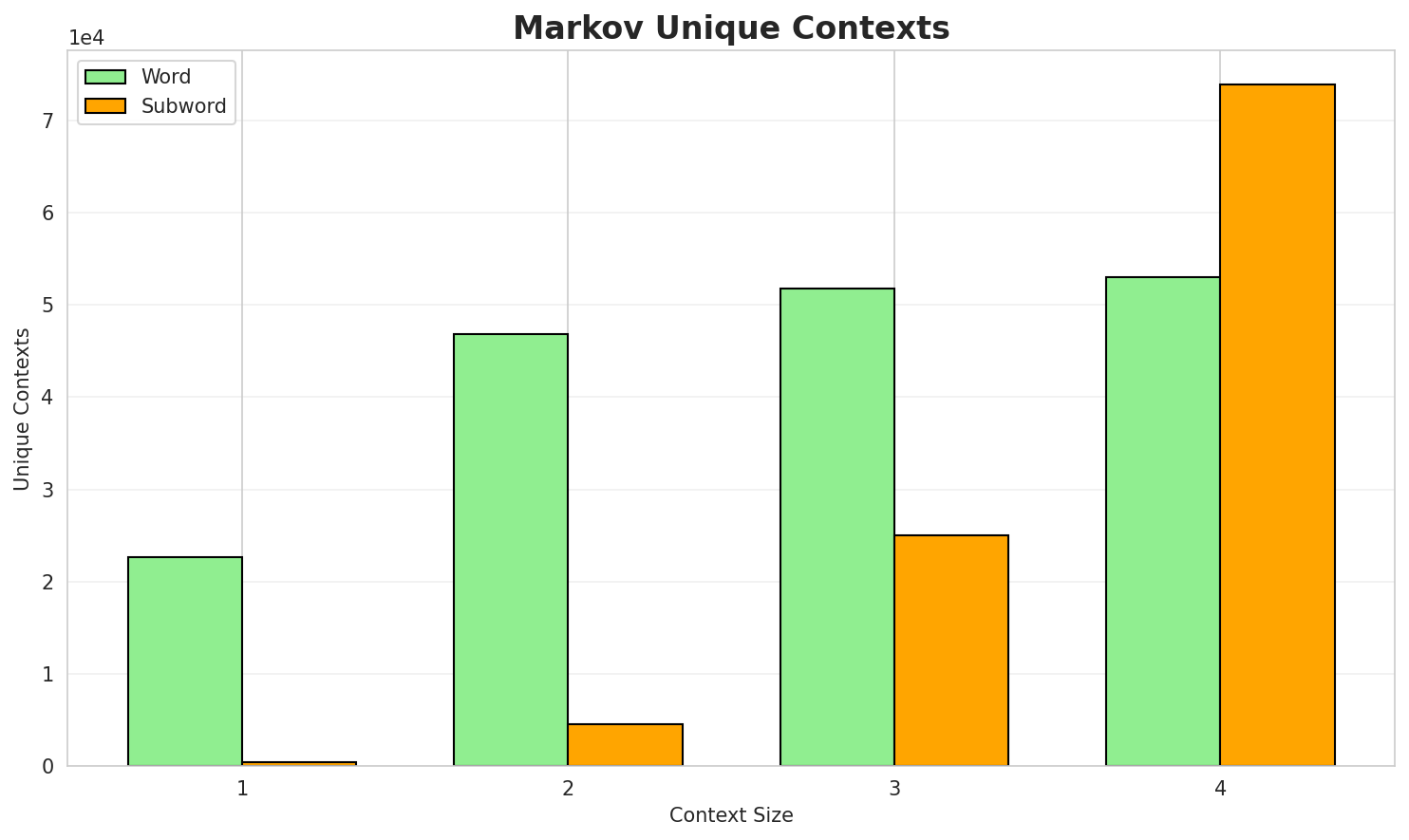

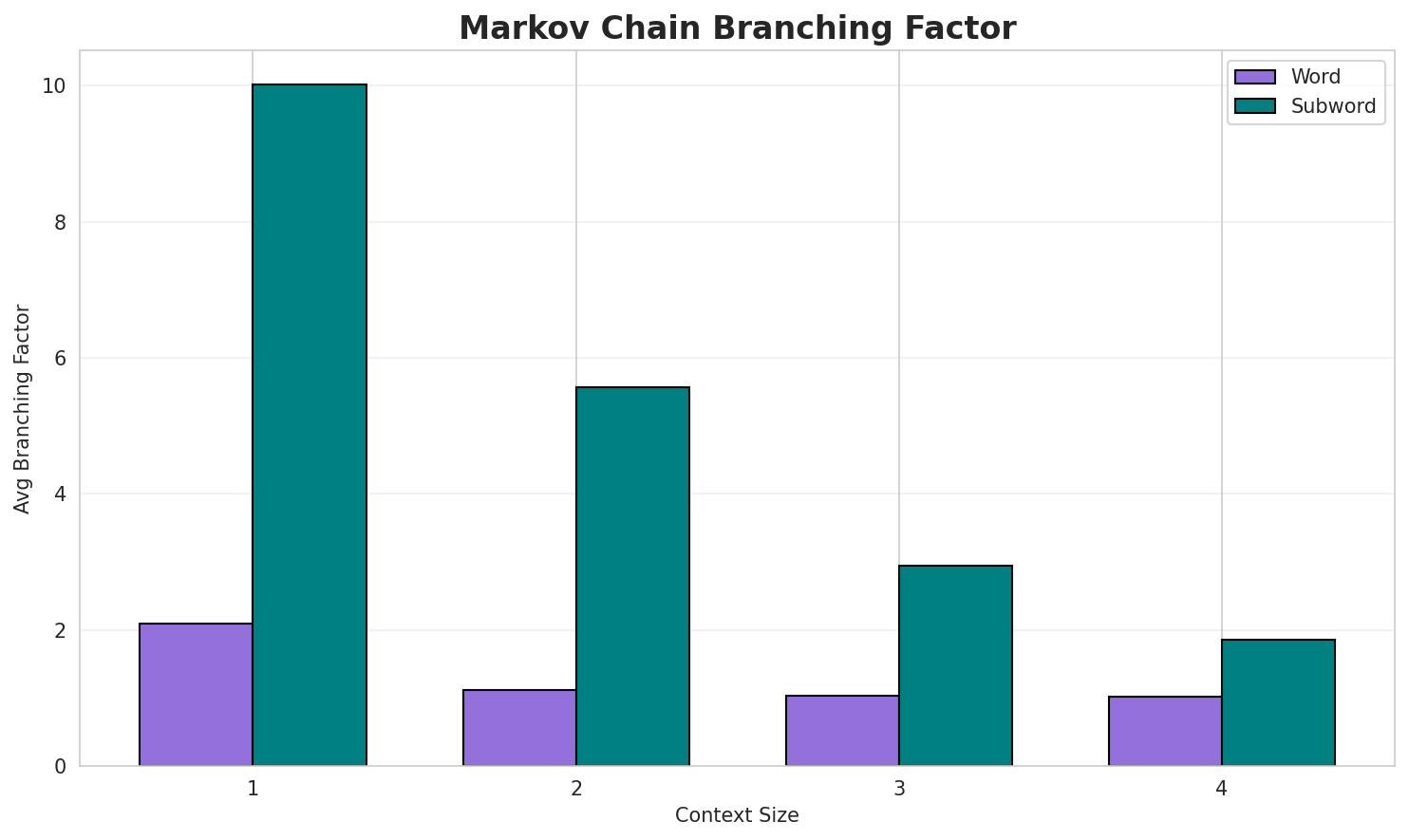

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.4365 | 1.353 | 2.10 | 22,306 | 56.3% |

| 1 | Subword | 1.4909 | 2.811 | 10.56 | 410 | 0.0% |

| 2 | Word | 0.0764 | 1.054 | 1.12 | 46,305 | 92.4% |

| 2 | Subword | 1.1481 | 2.216 | 5.61 | 4,325 | 0.0% |

| 3 | Word | 0.0240 | 1.017 | 1.03 | 51,243 | 97.6% |

| 3 | Subword | 0.7541 | 1.687 | 2.97 | 24,260 | 24.6% |

| 4 | Word | 0.0128 🏆 | 1.009 | 1.02 | 52,387 | 98.7% |

| 4 | Subword | 0.4304 | 1.348 | 1.86 | 72,077 | 57.0% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

и 13 мэ ащыщэу адыгэр сыдигъокіи адыгэ къуаж ипшъэ итхьапӏэ иблэгъожъхэм афэгъэхьыгъэ мифхэр къызэра...

адыгэ хьатыкъуай унагъохэр тыркуем и плакат ныбэрынхьэблэ адыгэбзэ жэбзэ къабзэ ежь ныпым зызиушъомб...

м ахахьэ хэгъэгу шавкат мирзияев къэрал лӏышъхьэр кӏокӏо къызбэч кавказ заом ыпэкӏэ щыӏагъэхэмрэ якъ...

Context Size 2:

нэбгырэ млн 10 фэдиз тешӏагъэу анатолием ахэр агъэкощыгъэх тхыгъэ зэфэшъхьафхэм мэхьанэу каноничност...

къехъу щэпсэу я 84 хэгэгум 93 030 км я 26 испаныбзэр ащ нэмыкӏэу регионыбзэхэр иӏэх дло м

картым тетэу бразилие къыблэ америкэм ыгу ит германиер аустриер словакиер руманиер украинэр сербиер ...

Context Size 3:

м къехъу щэпсэу хэгэгум 2 149 690 км арапыбз сауд арабиер арап къэралыгъомэ ащыщмэ анахь хэгъэгу ащы...

къехъу щэпсэу хэгэгум 140 800 км непали дло м хахьэ хэгъэгу хассанал болкиах географие азием и гъунэ...

адыгэ республикэм и къэралыгъо премие илауреат дунэе адыгэ академием иакадемик къалэу шъачэ поселкэу...

Context Size 4:

м къехъу щэпсэу хэгэгум 9 596 960 км китаибзэр дло м хахьэ хэгъэгу эмомали рахмон къэрал тхьэматэр к...

дло м хахьэ хэгъэгу джоко видодо гуадзэр юсуф калла географие океан шъэфымымрэ инд океанымрэ азфагу ...

еуропэм хэт къэралыгъу къэлэ париж нэбгырэ млн 66 м къехъу щэпсэу хэгэгум 9 984 670 км я 2 англыбзэ

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_фим_хъэрикъоламэм_илъу_-м_бэхь_ышъэпсым_илнине_

Context Size 2:

гъэгъэ_асэу_ɡʲadəъэхьэухэм_епхъухьэ_хэгьэмрэ_щыпӏэ-

Context Size 3:

гъэ_уахэмрэ,_къыуи_къалэбилэжъ_зэпхъэм_ыгугъэкон_къаук

Context Size 4:

ыгъэуцохэр_чэзыу-чэхэр_нэхъин_динхэр_загъэр_гъэп,_англыбз

Key Findings

- Best Predictability: Context-4 (word) with 98.7% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (up to 72,077 unique contexts for the context-4 subword model)

- Recommendation: Context-3 or Context-4 for text generation
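To make the context-size idea concrete, here is a minimal sketch of a word-level Markov chain generator. It is not the Wikilangs pipeline code: the function names `train` and `generate` and the English toy corpus are illustrative assumptions.

```python
import random
from collections import defaultdict


def train(tokens, context_size):
    """Map each context (tuple of `context_size` tokens) to the tokens seen after it."""
    model = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        model[context].append(tokens[i + context_size])
    return model


def generate(model, seed, length, rng):
    """Extend `seed` by up to `length` tokens, sampling in proportion to observed counts."""
    out = list(seed)
    k = len(seed)
    for _ in range(length):
        candidates = model.get(tuple(out[-k:]))
        if not candidates:  # unseen context: stop early
            break
        out.append(rng.choice(candidates))
    return out


# Hypothetical toy corpus, not taken from the ADY data.
corpus = "the cat sat on the mat and the cat ran".split()
model = train(corpus, context_size=2)
sample = generate(model, ("the", "cat"), length=5, rng=random.Random(0))
print(" ".join(sample))
```

With a larger context size, most contexts have a single observed continuation, which is why the word-based samples above reproduce long stretches of the training text.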

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 7,032 |

| Total Tokens | 44,503 |

| Mean Frequency | 6.33 |

| Median Frequency | 3 |

| Frequency Std Dev | 22.13 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | и | 1,013 |

| 2 | адыгэ | 666 |

| 3 | м | 489 |

| 4 | илъэсым | 398 |

| 5 | ащ | 391 |

| 6 | я | 309 |

| 7 | ары | 271 |

| 8 | нэбгырэ | 247 |

| 9 | а | 243 |

| 10 | ыкӏи | 211 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | рсфср | 2 |

| 2 | серийнэ | 2 |

| 3 | ныбжьыкӏэхэри | 2 |

| 4 | зэратебэнагъэр | 2 |

| 5 | хираганэ | 2 |

| 6 | катаканэ | 2 |

| 7 | сербыбзэм | 2 |

| 8 | къыздикӏыгъэр | 2 |

| 9 | тыванбзэ | 2 |

| 10 | къызыл | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 0.7821 |

| R² (Goodness of Fit) | 0.977951 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 29.3% |

| Top 1,000 | 60.6% |

| Top 5,000 | 90.9% |

| Top 10,000 | N/A (vocabulary has 7,032 words) |

Key Findings

- Zipf Compliance: R²=0.9780 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 29.3% of corpus

- Long Tail: The top 5,000 words cover 90.9% of the corpus; the remaining 2,032 vocabulary words account for the last 9.1%

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

Note: Multilingual alignment visualization not available for this language.

5.2 Model Comparison

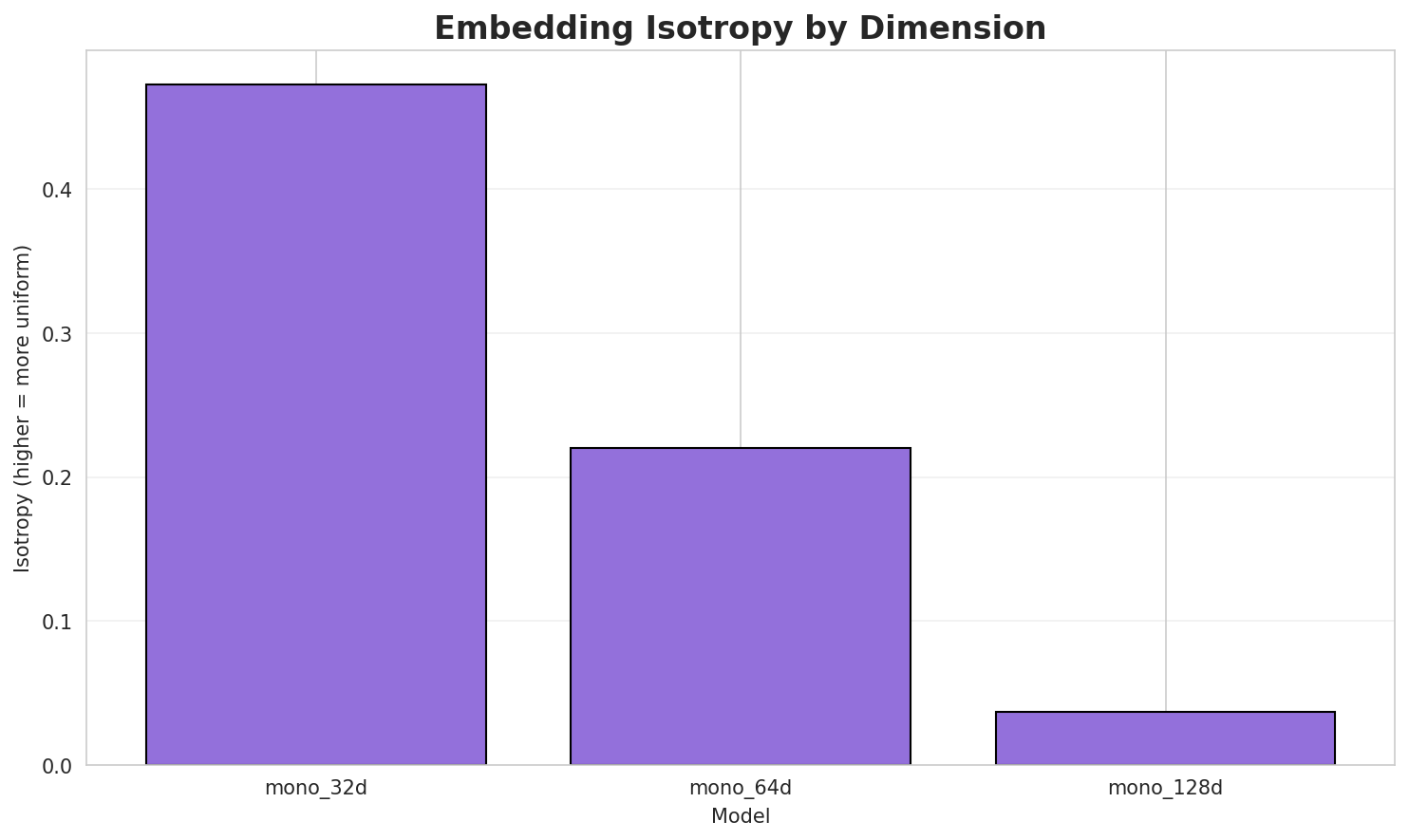

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.4730 🏆 | 0.4239 | N/A | N/A |

| mono_64d | 64 | 0.2201 | 0.4040 | N/A | N/A |

| mono_128d | 128 | 0.0372 | 0.3952 | N/A | N/A |

Key Findings

- Best Isotropy: mono_32d with 0.4730 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.4077. Lower values indicate better semantic separation.

- Alignment Quality: No aligned models evaluated in this run.

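- Recommendation: No cross-lingual recommendation can be made from this run because no aligned models were evaluated; among the monolingual models, mono_32d has the highest isotropy (0.4730)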

6. Morphological Analysis (Experimental)

⚠️ Warning: This language shows low morphological productivity. The statistical signals used for this analysis may be noisy or less reliable than for morphologically rich languages.

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 0.000 | Low morphological productivity | ⚠️ Likely unreliable |

| Idiomaticity Gap | -1.000 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
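The stripping test described above can be sketched roughly as follows. This is an assumed simplification, not the pipeline's implementation: the helper name `suffix_support`, the `min_stem_len` threshold, and the reading of "other contexts" as "the stem also starts another vocabulary word" are all illustrative choices.

```python
def suffix_support(vocab, suffix, min_stem_len=3):
    """Return stems left after stripping `suffix` that also start another vocabulary word.

    `suffix` must be a non-empty string.
    """
    words = set(vocab)
    supported = set()
    for word in words:
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem_len:
            stem = word[:-len(suffix)]
            # "Other contexts" is approximated here as: some other word shares the stem.
            if any(other != word and other.startswith(stem) for other in words):
                supported.add(stem)
    return supported


# Small vocabulary built from example words that appear in this report.
vocab = ["къуаджэхэр", "къуаджэхэм", "къуаджэхэу", "курдхэр", "латвиер"]
print(suffix_support(vocab, "хэр"))
```

In this toy vocabulary, stripping `хэр` from `курдхэр` leaves a stem that no other word shares, so only the stem shared by the `къуаджэ...` forms is returned.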

Productive Prefixes

| Prefix | Examples |

|---|---|

| -къ | къыщыхъу, къуаджэхэу, къэбарым |

| -зэ | зэман, зэдаштэгъэ, зэпэух |

| -къы | къыщыхъу, къыщыфэфедэщтхэу, къызыхэкӏыгъэр |

Productive Suffixes

| Suffix | Examples |

|---|---|

| -э | ятхьэ, урысыбзэ, чылэ |

| -м | такъырым, шапхъэхэм, къэбарым |

| -р | латвиер, сыхьатыр, министр |

| -эр | курдхэр, щыгъынхэр, мэхъошхэр |

| -эм | шапхъэхэм, япэм, урымыбзэм |

| -эу | алфавитэу, илъхэу, игъэкӏотыгъэу |

| -хэр | курдхэр, щыгъынхэр, мэхъошхэр |

| -рэ | къагъэлъагъуэрэ, зыгорэ, цӏэмрэ |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |

|---|---|---|---|

| тыгъ | 1.78x | 28 contexts | тыгъэ, итыгъ, тыгъу |

| ъагъ | 2.15x | 14 contexts | пчъагъ, лъагъо, пчъагъэ |

| агъэ | 1.54x | 41 contexts | тхагъэ, благъэ, пчагъэ |

| эпкъ | 1.74x | 25 contexts | нэпкъ, тхэпкъ, лъэпкъ |

| къуа | 2.16x | 10 contexts | къуае, къуажэ, къуадж |

| ъхьэ | 1.78x | 16 contexts | шъхьэ, пшъхьэ, шъхьэм |

| дыгэ | 1.82x | 14 contexts | адыгэ, адыгэм, иадыгэ |

| эхэр | 1.56x | 21 contexts | бэхэр, усэхэр, ынэхэр |

| шъхь | 1.49x | 24 contexts | шъхьэ, пшъхьэ, шъхьэм |

| псэу | 1.57x | 19 contexts | щыпсэу, щэпсэу, сыпсэу |

| ыгъо | 1.56x | 19 contexts | цыгъо, мыгъо, пщыгъо |

| гъэх | 1.65x | 14 contexts | багъэх, хъугъэх, ежагъэх |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |

|---|---|---|---|

| -къ | -э | 96 words | къэлэмымкӏэ, къалэмэ |

| -къ | -р | 64 words | къор, къуаджэхэр |

| -къ | -м | 56 words | къалэм, къумбылым |

| -къ | -эр | 52 words | къуаджэхэр, къэбархэр |

| -зэ | -р | 42 words | зэготхэр, зэхэтхэр |

| -зэ | -м | 41 words | зэхэзгъэуцуагъэхэм, зэӏукӏэгъум |

| -къ | -эм | 36 words | къалэм, къуаджэхэм |

| -зэ | -эр | 34 words | зэготхэр, зэхэтхэр |

| -къ | -эу | 34 words | къыхэкӏыгъэу, къэгъэлъэгъонэу |

| -зэ | -э | 31 words | зэ, зэригъэфэгъэ |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |

|---|---|---|---|

| щыпсэухэрэр | щыпс-эу-хэр-эр | 7.5 | щыпс |

| америкэмрэ | америк-эм-рэ | 6.0 | америк |

| океанымрэ | океан-ым-рэ | 6.0 | океан |

| литературэмрэ | литератур-эм-рэ | 6.0 | литератур |

| бзылъфыгъэмрэ | бзылъфыгъ-эм-рэ | 6.0 | бзылъфыгъ |

| адыгабзэмрэ | адыгабз-эм-рэ | 6.0 | адыгабз |

| хыплъыжьымрэ | хыплъыжь-ым-рэ | 6.0 | хыплъыжь |

| алфавитэу | алфавит-эу | 4.5 | алфавит |

| цӏыкӏухэр | цӏыкӏу-хэр | 4.5 | цӏыкӏу |

| исурэтхэр | исурэт-хэр | 4.5 | исурэт |

| шӏыпӏэхэр | шӏыпӏэ-хэр | 4.5 | шӏыпӏэ |

| шӏэныгъэм | шӏэныгъ-эм | 4.5 | шӏэныгъ |

| къыпыщылъ | къы-пыщылъ | 4.5 | пыщылъ |

| пэблагъэу | пэблагъ-эу | 4.5 | пэблагъ |

| ишъхъэрэмрэ | ишъхъ-эр-эм-рэ | 4.5 | ишъхъ |

6.6 Linguistic Interpretation

Automated Insight: The language ADY appears to be more isolating or has a highly fixed vocabulary. Word-level models perform nearly as well as subword models, indicating fewer productive morphological processes.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 32k BPE | Best compression (4.23x) |

| N-gram | 2-gram | Lowest perplexity (399) |

| Markov | Context-4 | Highest predictability (98.7%) |

| Embeddings | mono_32d | Highest isotropy (0.4730) among the evaluated models |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
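As a small illustration of the chars/token definition, the sketch below computes the ratio for a hand-written tokenization. The function name `compression_ratio` and the toy example are assumptions for illustration; they are not taken from the released tokenizers.

```python
def compression_ratio(text, tokens):
    """Characters per token: len(text) / number of tokens."""
    if not tokens:
        raise ValueError("token list is empty")
    return len(text) / len(tokens)


# Hypothetical toy example (not from the ADY corpus).
text = "low lower lowest"
tokens = ["▁low", "▁low", "er", "▁low", "est"]
ratio = compression_ratio(text, tokens)
print(f"{ratio:.2f}x")
```

A larger vocabulary usually merges more characters into each token, which is the pattern the 8k, 16k and 32k rows of the results table show.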

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
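A minimal sketch of the rate computation, assuming tokens are integer IDs and the unknown token has a single ID. The value `unk_id=0` is a hypothetical placeholder, not the ID used by the released tokenizers.

```python
def unk_rate(token_ids, unk_id):
    """Percentage of tokens equal to the unknown-token ID."""
    if not token_ids:
        return 0.0
    unknown = sum(1 for t in token_ids if t == unk_id)
    return 100.0 * unknown / len(token_ids)


# Hypothetical encoded sequence in which ID 0 stands for UNK.
ids = [5, 0, 7, 0]
print(f"{unk_rate(ids, unk_id=0):.1f}%")
```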

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

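What to seek: Lower is better. With enough training data, perplexity tends to decrease with larger n-grams (more context); on small corpora, sparse higher-order n-grams can push it up, as in the n-gram results above. Values vary widely by language and corpus size.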

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy typically decreases as n-gram size increases, provided the corpus is large enough to cover the higher-order n-grams.
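The relation perplexity = 2^entropy can be checked against the n-gram results table. The sketch below recomputes perplexity from the rounded entropy values; small differences from the reported figures are expected because the table rounds entropy to two decimals. The function name is an illustrative assumption.

```python
def perplexity_from_entropy(entropy_bits):
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** entropy_bits


# (entropy, reported perplexity) pairs copied from the n-gram results table.
reported = {
    "2-gram word": (8.71, 418),
    "2-gram subword": (8.64, 399),
    "3-gram word": (9.46, 706),
    "3-gram subword": (11.44, 2788),
    "4-gram word": (11.48, 2848),
    "4-gram subword": (13.38, 10651),
}

for name, (entropy, ppl) in reported.items():
    print(f"{name}: recomputed {perplexity_from_entropy(entropy):.0f}, reported {ppl}")
```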

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
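A rough sketch of how Top-K coverage can be computed from n-gram counts, assuming the counts are held in a `collections.Counter`. The toy sequence and the function name `top_k_coverage` are illustrative, not part of the pipeline.

```python
from collections import Counter


def top_k_coverage(counts, k):
    """Percentage of all n-gram occurrences covered by the k most frequent n-grams."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counts.most_common(k))
    return 100.0 * covered / total


# Hypothetical toy sequence; bigrams are built by pairing adjacent tokens.
words = "a b a c a b d".split()
bigrams = Counter(zip(words, words[1:]))
print(f"top-1: {top_k_coverage(bigrams, 1):.1f}%")
print(f"top-10: {top_k_coverage(bigrams, 10):.1f}%")
```

When K exceeds the number of unique n-grams, coverage is 100%, which is why the word-level 2-gram and 3-gram rows (593 and 922 unique n-grams) report 100.0% for Top-1000.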

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
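The two definitions above (average entropy and branching factor) can be sketched from a table of transition counts. This assumes an unweighted mean over contexts, which the glossary does not state explicitly; the helper names and the toy sequence are illustrative.

```python
import math
from collections import Counter, defaultdict


def build_transitions(tokens, context_size):
    """Count next-token occurrences for every context of `context_size` tokens."""
    transitions = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        transitions[context][tokens[i + context_size]] += 1
    return transitions


def branching_factor(transitions):
    """Mean number of distinct next tokens per context."""
    return sum(len(nxt) for nxt in transitions.values()) / len(transitions)


def average_entropy(transitions):
    """Unweighted mean over contexts of the next-token entropy in bits."""
    total = 0.0
    for nxt in transitions.values():
        n = sum(nxt.values())
        total -= sum((c / n) * math.log2(c / n) for c in nxt.values())
    return total / len(transitions)


# Hypothetical toy sequence.
tokens = "a b a c a b".split()
transitions = build_transitions(tokens, context_size=1)
print(branching_factor(transitions), average_entropy(transitions))
```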

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
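The normalization behind this metric is not spelled out here. The values in the Markov chain results table are consistent with clamping `1 - average entropy` at zero, so the sketch below assumes that reading; treat it as an inference from the reported numbers rather than the pipeline's actual code.

```python
def predictability(avg_entropy_bits):
    """Assumed formula: max(0, 1 - entropy) expressed as a percentage."""
    return max(0.0, 1.0 - avg_entropy_bits) * 100.0


# (average entropy, reported predictability %) pairs from the Markov results table.
reported = [
    (0.4365, 56.3), (1.4909, 0.0),
    (0.0764, 92.4), (1.1481, 0.0),
    (0.0240, 97.6), (0.7541, 24.6),
    (0.0128, 98.7), (0.4304, 57.0),
]

for entropy, pct in reported:
    print(f"H={entropy:.4f}: recomputed {predictability(entropy):.1f}%, reported {pct}%")
```

Under this reading, any model whose average entropy exceeds 1 bit reports 0.0%, which matches the context-1 and context-2 subword rows.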

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.
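One way to estimate the coefficient is an ordinary least-squares fit of log frequency against log rank. The sketch below is a plain-Python version under that assumption; the actual fitting procedure used for this report is not documented here, and the Zipf table appears to list the magnitude of the slope rather than its signed value.

```python
import math


def fit_zipf(frequencies):
    """Least-squares fit of log(frequency) vs. log(rank); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot


# Synthetic frequencies that follow Zipf's law exactly (f = 1000 / rank).
ideal = [1000 / rank for rank in range(1, 51)]
slope, r_squared = fit_zipf(ideal)
print(f"slope={slope:.3f}, R^2={r_squared:.3f}")
```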

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.
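The cumulative view can also be inverted to ask how many words are needed to reach a target coverage. A minimal sketch, with an illustrative function name and made-up frequencies:

```python
def words_needed(frequencies, target_pct):
    """Smallest number of top-ranked words whose cumulative share reaches target_pct."""
    freqs = sorted(frequencies, reverse=True)
    total = sum(freqs)
    running = 0
    for n, freq in enumerate(freqs, start=1):
        running += freq
        if 100.0 * running / total >= target_pct:
            return n
    return len(freqs)


# Hypothetical frequency list summing to 100 so the shares are easy to read.
freqs = [50, 20, 10, 10, 5, 5]
for target in (50, 80, 100):
    print(target, words_needed(freqs, target))
```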

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
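For reference, cosine similarity and a mean pairwise similarity over a set of vectors can be sketched in plain Python as below. Treating the report's "Semantic Density" as a mean pairwise cosine is an assumption based on its description in the embeddings section; the vectors are toy values, not trained embeddings.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


def mean_pairwise_cosine(vectors):
    """Average cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


# Hypothetical 2-dimensional vectors.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mean_similarity = mean_pairwise_cosine(vectors)
print(f"{mean_similarity:.4f}")
```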

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-03 05:00:02