Eugene Siow

commited on

Commit

·

bffd03a

1

Parent(s):

cca5219

Initial commit.

Browse files- README.md +142 -0

- config.json +10 -0

- images/pan_2_4_compare.png +0 -0

- images/pan_4_4_compare.png +0 -0

- pytorch_model_2x.pt +3 -0

- pytorch_model_4x.pt +3 -0

README.md

ADDED

|

@@ -0,0 +1,142 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

tags:

|

| 4 |

+

- super-image

|

| 5 |

+

- image-super-resolution

|

| 6 |

+

datasets:

|

| 7 |

+

- eugenesiow/Div2k

|

| 8 |

+

- eugenesiow/Set5

|

| 9 |

+

- eugenesiow/Set14

|

| 10 |

+

- eugenesiow/BSD100

|

| 11 |

+

- eugenesiow/Urban100

|

| 12 |

+

metrics:

|

| 13 |

+

- pnsr

|

| 14 |

+

- ssim

|

| 15 |

+

---

|

| 16 |

+

# Pixel Attention Network (PAN)

|

| 17 |

+

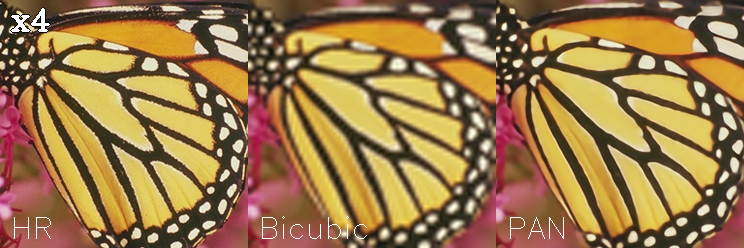

PAN model pre-trained on DIV2K (800 images training, augmented to 4000 images, 100 images validation) for 2x, 3x and 4x image super resolution. It was introduced in the paper [Efficient Image Super-Resolution Using Pixel Attention](https://arxiv.org/abs/2010.01073) by Zhao et al. (2020) and first released in [this repository](https://github.com/zhaohengyuan1/PAN).

|

| 18 |

+

|

| 19 |

+

The goal of image super resolution is to restore a high resolution (HR) image from a single low resolution (LR) image. The image below shows the ground truth (HR), the bicubic upscaling and model upscaling.

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

## Model description

|

| 23 |

+

The PAN model proposes a a lightweight convolutional neural network for image super resolution. Pixel attention (PA) is similar to channel attention and spatial attention in formulation. PA however produces 3D attention maps instead of a 1D attention vector or a 2D map. This attention scheme introduces fewer additional parameters but generates better SR results.

|

| 24 |

+

|

| 25 |

+

The model is very lightweight with the model being just 260k to 270k parameters (~1mb).

|

| 26 |

+

## Intended uses & limitations

|

| 27 |

+

You can use the pre-trained models for upscaling your images 2x, 3x and 4x. You can also use the trainer to train a model on your own dataset.

|

| 28 |

+

### How to use

|

| 29 |

+

The model can be used with the [super_image](https://github.com/eugenesiow/super-image) library:

|

| 30 |

+

```bash

|

| 31 |

+

pip install super-image

|

| 32 |

+

```

|

| 33 |

+

Here is how to use a pre-trained model to upscale your image:

|

| 34 |

+

```python

|

| 35 |

+

from super_image import PanModel, ImageLoader

|

| 36 |

+

from PIL import Image

|

| 37 |

+

import requests

|

| 38 |

+

|

| 39 |

+

url = 'https://paperswithcode.com/media/datasets/Set5-0000002728-07a9793f_zA3bDjj.jpg'

|

| 40 |

+

image = Image.open(requests.get(url, stream=True).raw)

|

| 41 |

+

|

| 42 |

+

model = PanModel.from_pretrained('eugenesiow/pan', scale=2) # scale 2, 3 and 4 models available

|

| 43 |

+

inputs = ImageLoader.load_image(image)

|

| 44 |

+

preds = model(inputs)

|

| 45 |

+

|

| 46 |

+

ImageLoader.save_image(preds, './scaled_2x.png') # save the output 2x scaled image to `./scaled_2x.png`

|

| 47 |

+

ImageLoader.save_compare(inputs, preds, './scaled_2x_compare.png') # save an output comparing the super-image with a bicubic scaling

|

| 48 |

+

```

|

| 49 |

+

[](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Upscale_Images_with_Pretrained_super_image_Models.ipynb "Open in Colab")

|

| 50 |

+

## Training data

|

| 51 |

+

The models for 2x, 3x and 4x image super resolution were pretrained on [DIV2K](https://huggingface.co/datasets/eugenesiow/Div2k), a dataset of 800 high-quality (2K resolution) images for training, augmented to 4000 images and uses a dev set of 100 validation images (images numbered 801 to 900).

|

| 52 |

+

## Training procedure

|

| 53 |

+

### Preprocessing

|

| 54 |

+

We follow the pre-processing and training method of [Wang et al.](https://arxiv.org/abs/2104.07566).

|

| 55 |

+

Low Resolution (LR) images are created by using bicubic interpolation as the resizing method to reduce the size of the High Resolution (HR) images by x2, x3 and x4 times.

|

| 56 |

+

During training, RGB patches with size of 64×64 from the LR input are used together with their corresponding HR patches.

|

| 57 |

+

Data augmentation is applied to the training set in the pre-processing stage where five images are created from the four corners and center of the original image.

|

| 58 |

+

|

| 59 |

+

We need the huggingface [datasets](https://huggingface.co/datasets?filter=task_ids:other-other-image-super-resolution) library to download the data:

|

| 60 |

+

```bash

|

| 61 |

+

pip install datasets

|

| 62 |

+

```

|

| 63 |

+

The following code gets the data and preprocesses/augments the data.

|

| 64 |

+

|

| 65 |

+

```python

|

| 66 |

+

from datasets import load_dataset

|

| 67 |

+

from super_image.data import EvalDataset, TrainDataset, augment_five_crop

|

| 68 |

+

|

| 69 |

+

augmented_dataset = load_dataset('eugenesiow/Div2k', 'bicubic_x4', split='train')\

|

| 70 |

+

.map(augment_five_crop, batched=True, desc="Augmenting Dataset") # download and augment the data with the five_crop method

|

| 71 |

+

train_dataset = TrainDataset(augmented_dataset) # prepare the train dataset for loading PyTorch DataLoader

|

| 72 |

+

eval_dataset = EvalDataset(load_dataset('eugenesiow/Div2k', 'bicubic_x4', split='validation')) # prepare the eval dataset for the PyTorch DataLoader

|

| 73 |

+

```

|

| 74 |

+

### Pretraining

|

| 75 |

+

The model was trained on GPU. The training code is provided below:

|

| 76 |

+

```python

|

| 77 |

+

from super_image import Trainer, TrainingArguments, PanModel, PanConfig

|

| 78 |

+

|

| 79 |

+

training_args = TrainingArguments(

|

| 80 |

+

output_dir='./results', # output directory

|

| 81 |

+

num_train_epochs=1000, # total number of training epochs

|

| 82 |

+

)

|

| 83 |

+

|

| 84 |

+

config = PanConfig(

|

| 85 |

+

scale=4, # train a model to upscale 4x

|

| 86 |

+

)

|

| 87 |

+

model = PanModel(config)

|

| 88 |

+

|

| 89 |

+

trainer = Trainer(

|

| 90 |

+

model=model, # the instantiated model to be trained

|

| 91 |

+

args=training_args, # training arguments, defined above

|

| 92 |

+

train_dataset=train_dataset, # training dataset

|

| 93 |

+

eval_dataset=eval_dataset # evaluation dataset

|

| 94 |

+

)

|

| 95 |

+

|

| 96 |

+

trainer.train()

|

| 97 |

+

```

|

| 98 |

+

|

| 99 |

+

[](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Train_super_image_Models.ipynb "Open in Colab")

|

| 100 |

+

## Evaluation results

|

| 101 |

+

The evaluation metrics include [PSNR](https://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio#Quality_estimation_with_PSNR) and [SSIM](https://en.wikipedia.org/wiki/Structural_similarity#Algorithm).

|

| 102 |

+

|

| 103 |

+

Evaluation datasets include:

|

| 104 |

+

- Set5 - [Bevilacqua et al. (2012)](https://huggingface.co/datasets/eugenesiow/Set5)

|

| 105 |

+

- Set14 - [Zeyde et al. (2010)](https://huggingface.co/datasets/eugenesiow/Set14)

|

| 106 |

+

- BSD100 - [Martin et al. (2001)](https://huggingface.co/datasets/eugenesiow/BSD100)

|

| 107 |

+

- Urban100 - [Huang et al. (2015)](https://huggingface.co/datasets/eugenesiow/Urban100)

|

| 108 |

+

|

| 109 |

+

The results columns below are represented below as `PSNR/SSIM`. They are compared against a Bicubic baseline.

|

| 110 |

+

|

| 111 |

+

|Dataset |Scale |Bicubic |pan |

|

| 112 |

+

|--- |--- |--- |--- |

|

| 113 |

+

|Set5 |2x |33.64/0.9292 |**37.77/0.9599** |

|

| 114 |

+

|Set5 |3x |30.39/0.8678 |**** |

|

| 115 |

+

|Set5 |4x |28.42/0.8101 |**31.92/0.8915** |

|

| 116 |

+

|Set14 |2x |30.22/0.8683 |**33.42/0.9162** |

|

| 117 |

+

|Set14 |3x |27.53/0.7737 |**** |

|

| 118 |

+

|Set14 |4x |25.99/0.7023 |**28.57/0.7802** |

|

| 119 |

+

|BSD100 |2x |29.55/0.8425 |**33.6/0.9235** |

|

| 120 |

+

|BSD100 |3x |27.20/0.7382 |**** |

|

| 121 |

+

|BSD100 |4x |25.96/0.6672 |**28.35/0.7595** |

|

| 122 |

+

|Urban100 |2x |26.66/0.8408 |**31.31/0.9197** |

|

| 123 |

+

|Urban100 |3x | |**** |

|

| 124 |

+

|Urban100 |4x |23.14/0.6573 |**25.63/0.7692** |

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

|

| 128 |

+

You can find a notebook to easily run evaluation on pretrained models below:

|

| 129 |

+

|

| 130 |

+

[](https://colab.research.google.com/github/eugenesiow/super-image-notebooks/blob/master/notebooks/Evaluate_Pretrained_super_image_Models.ipynb "Open in Colab")

|

| 131 |

+

|

| 132 |

+

## BibTeX entry and citation info

|

| 133 |

+

```bibtex

|

| 134 |

+

@misc{zhao2020efficient,

|

| 135 |

+

title={Efficient Image Super-Resolution Using Pixel Attention},

|

| 136 |

+

author={Hengyuan Zhao and Xiangtao Kong and Jingwen He and Yu Qiao and Chao Dong},

|

| 137 |

+

year={2020},

|

| 138 |

+

eprint={2010.01073},

|

| 139 |

+

archivePrefix={arXiv},

|

| 140 |

+

primaryClass={eess.IV}

|

| 141 |

+

}

|

| 142 |

+

```

|

config.json

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bam": false,

|

| 3 |

+

"data_parallel": false,

|

| 4 |

+

"in_nc": 3,

|

| 5 |

+

"model_type": "PAN",

|

| 6 |

+

"nb": 16,

|

| 7 |

+

"nf": 40,

|

| 8 |

+

"out_nc": 3,

|

| 9 |

+

"unf": 24

|

| 10 |

+

}

|

images/pan_2_4_compare.png

ADDED

|

images/pan_4_4_compare.png

ADDED

|

pytorch_model_2x.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8fc18d9e39da7734661b8db9f1b024f874d5ba74ef39b5963f9f534443245beb

|

| 3 |

+

size 1098409

|

pytorch_model_4x.pt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5ab3ae83d4aed20c624cc06908c7a6a587a602ed8b387d44cabfa1f8a1354cc9

|

| 3 |

+

size 1144413

|