---
license: llama3.2
language:
- en
base_model:
- meta-llama/Llama-3.2-3B-Instruct
tags:
- merge
widget:
- text: "Eximius_Persona_5B"
  output:
    url: https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B/resolve/main/Images/Eximius_Persona_5B.png
---

Eximius_Persona_5B

---


I wanted to create a model with an **exceptional** capacity for using varied speech patterns and **fresh** role-play takes. The model had to have a unique personality, not on a surface level but on the inside, **for real**. Unfortunately, SFT alone just didn't cut it. And I had only 16GB of VRAM at the time. Oh, and I wanted it to be small enough to be viable for phones and to be able to put up a fight against larger models while at it. If only there was a magical way to do it.

**Merges**. Merges are quite unique. In the early days, they were considered "fake." Clearly, there's no such thing as merges. Where are the papers? No papers? Then it's clearly impossible. "Mathematically impossible." Simply preposterous. To mix layers and hope for a coherent output? What nonsense!

And yet, they were **real**. Undi95 made some of the earliest merges I can remember, and the "LLAMA2 Era" was truly amazing and innovative thanks to them. Cool stuff like Tiefighter was being made, and eventually the time-tested Midnight-Miqu-70B (v1.5 is my personal favorite).

Merges are an interesting thing, as they affect LLMs in a way that is currently **impossible** to reproduce using **SFT** (or any 'SOTA' technique). Even though today's LLMs are orders of magnitude smarter, they are still plagued by **GPTisms** and **predictability**. Merges can potentially 'solve' that. How? In short, if you physically tear out neurons (**passthrough** brain surgery) while somehow keeping the model coherent enough (and, if you're lucky, still able to follow instructions), then magical stuff begins to happen.

Magic, because it's **not** an exact science: there's some art to it, and a lot of it is done on **intuition**. GPTisms are patterns that the model really, **really** "wants" to follow, and it's quite hard to dissuade it. But if you yeet a couple of layers and rearrange them, boy does it get hard for the model to spew those shivers down the spine... Instead, it starts spewing stuff it was never intended to. It breaks its patterns and introduces some healthy chaos into the mix.
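To make "yeet a couple of layers and rearrange them" a bit more concrete: a passthrough merge does no weight averaging at all; it stacks slices of decoder layers (possibly from different checkpoints, possibly overlapping) into a new, deeper model. The sketch below only computes the resulting layer plan. The slice ranges are hypothetical and are **not** the recipe used for this model; the 28-layer depth is that of the LLAMA 3.2 3B base. Tools such as mergekit express the same idea as a list of `slices` with `layer_range` entries and `merge_method: passthrough`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerSlice:
    """A half-open range [start, end) of decoder layers taken from one checkpoint."""
    model: str
    start: int
    end: int


def passthrough_plan(slices, num_layers_per_model):
    """Return the (model, source_layer) pairs of the stacked model, in order.

    Passthrough copies layers verbatim: no averaging, just concatenation.
    """
    plan = []
    for s in slices:
        depth = num_layers_per_model[s.model]
        if not 0 <= s.start < s.end <= depth:
            raise ValueError(f"invalid layer range [{s.start}, {s.end}) for {s.model}")
        plan.extend((s.model, layer) for layer in range(s.start, s.end))
    return plan


# Hypothetical example: two overlapping slices of the same 28-layer base.
BASE = "meta-llama/Llama-3.2-3B-Instruct"
slices = [LayerSlice(BASE, 0, 16), LayerSlice(BASE, 8, 28)]
plan = passthrough_plan(slices, {BASE: 28})
print(len(plan))  # 36: source layers 8-15 now appear twice
```

Turning such a plan into real weights also means copying the tensors and updating the layer count in the model config, which is the part dedicated merge tools take care of.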

This model, **Eximius_Persona_5B**, is the result of multiple merges, that have been tuned, then merged again, then... for many times and iterations. The base was LLAMA 3.2 3B and I focused on achieving the following **4 traits**, in that specific order:

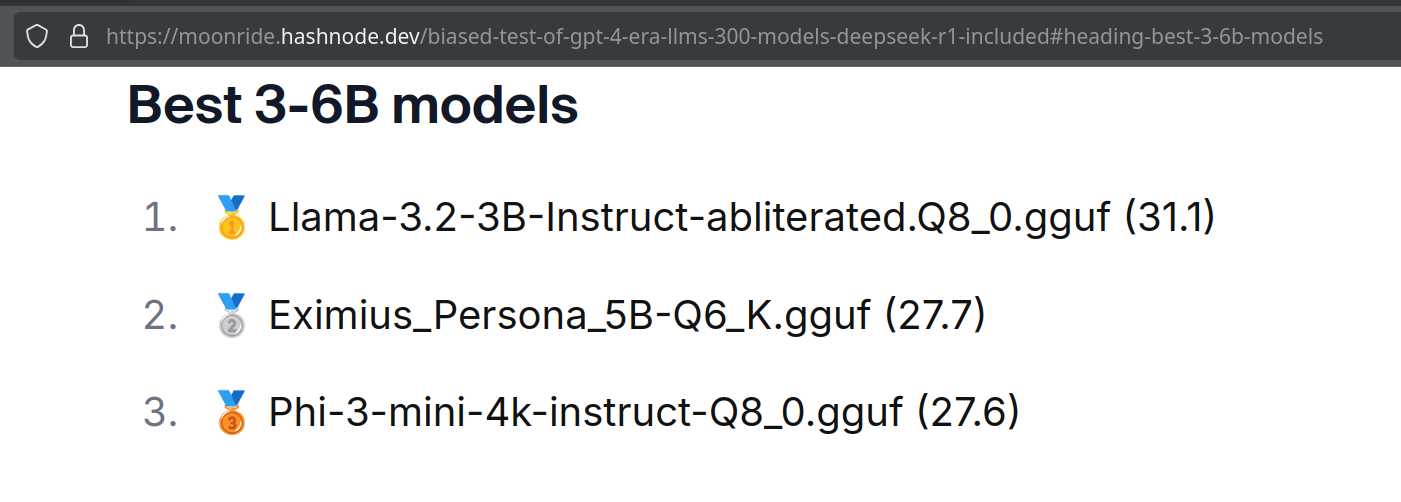

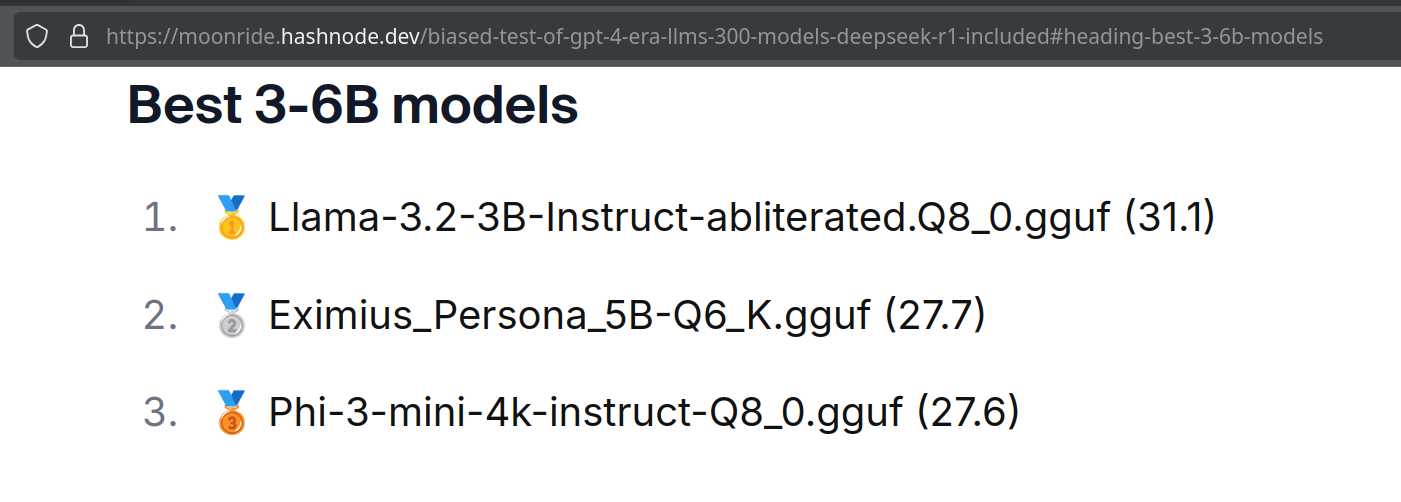

- **2nd Highest rated model** in the 3-6B category according to a closed external benchmark. See details at the buttom of the page.

- Varied speech patterns

- Roleplay ability

- Long context coherency

- Instruction following

For me, varied speech patterns were more important than instruction following; for instruction following, we have API models, or LLAMA 3.3. Many models are excellent assistants, yet they all sound pretty much the same.

I also wanted to make use of my **4090m 16GB** while my workstation crunches **Phi-4**'s brain. Making a nice 5B model aligns with my goal of making AI accessible and fun for everyone, and hence **Eximius_Persona_5B** was born. Let this also be a call to action for more people to make AI models: you don't need multiple GPUs or a fortune to spend on the cloud (although that definitely opens up options); you can do plenty with a mere 16GB of VRAM. And in case 16GB seems out of reach too, I should mention that Google Colab gives access to a free T4.

I uploaded a more funky, less stable, and thiccer version of Eximius_Persona to my prototyping org here:

[Eximius_Persona with 84 Layers from various checkpoints](https://huggingface.co/Sicarius-Prototyping/Eximius_Persona_84L)

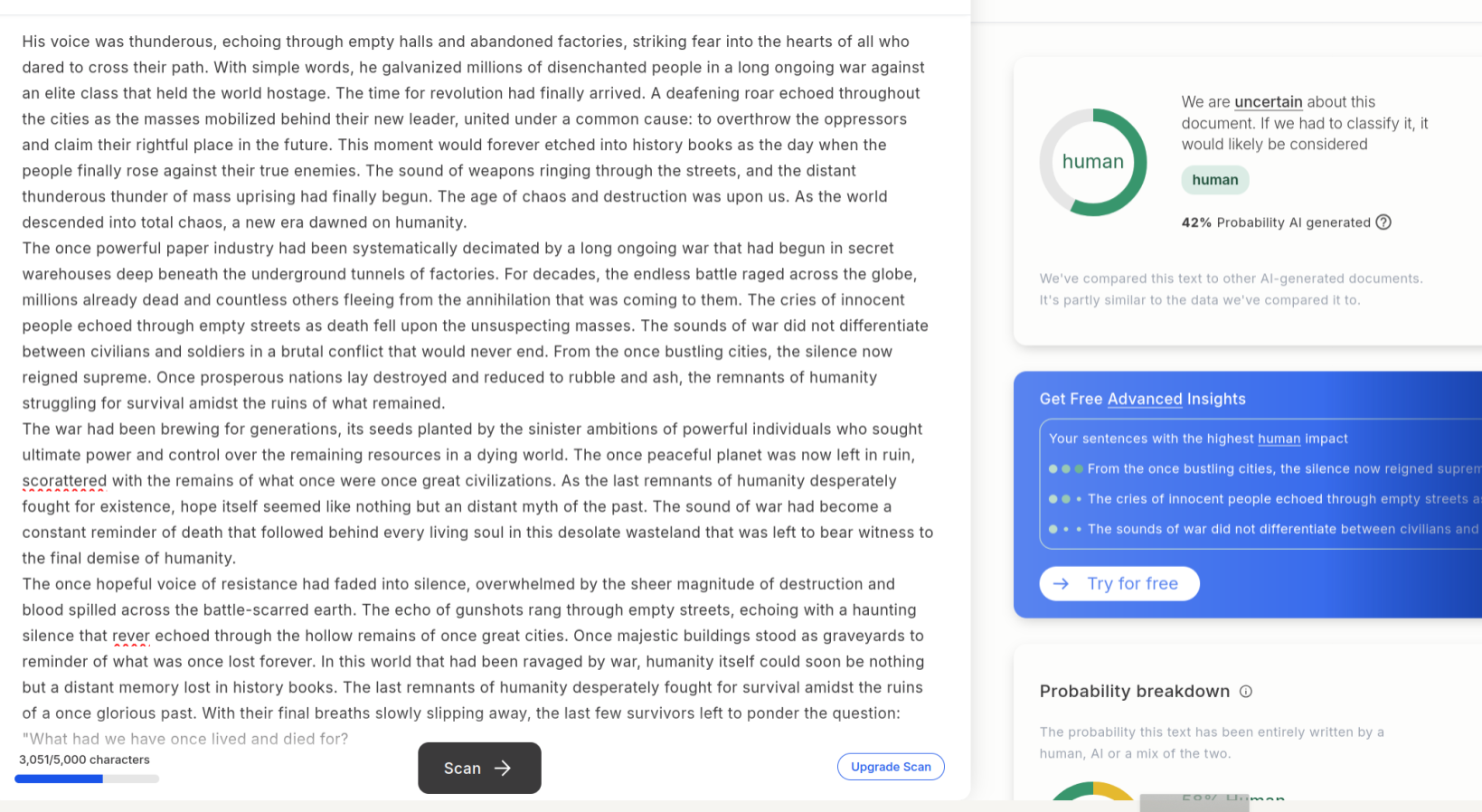

(From some early tests: it occasionally outputs stories that fool GPTZero into judging them human-written, scoring **60% human**, 40% AI with a lucky roll.)

---

---

---

I wanted to create a model with an **exceptional** capacity for using varied speech patterns and **fresh** role-play takes. The model had to have a unique personality, not on a surface level but on the inside, **for real**. Unfortunately, SFT alone just didn't cut it. And I had only 16GB of VRAM at the time. Oh, and I wanted it to be small enough to be viable for phones and to be able to give a fight to larger models while at it. If only there was a magical way to do it.

**Merges**. Merges are quite unique. In the early days, they were considered "fake." Clearly, there's no such thing as merges. Where are the papers? No papers? Then it's clearly impossible. "Mathematically impossible." Simply preposterous. To mix layers and hope for a coherent output? What nonsense!

And yet, they were **real**. Undi95 made some of the earliest merges I can remember, and the "LLAMA2 Era" was truly amazing and innovative thanks to them. Cool stuff like Tiefighter was being made, and eventually the time tested Midnight-Miqu-70B (v1.5 is my personal favorite).

Merges are an interesting thing, as they affect LLMs in a way that is currently **impossible** to reproduce using **SFT** (or any 'SOTA' technique). One of the plagues we have today, while we have orders of magnitude smarter LLMs, is **GPTisms** and **predictability**. Merges can potentially 'solve' that. How? In short, if you physically tear neurons (**passthrough** brain surgery) while you somehow manage to keep the model coherent enough, and if you're lucky, it can even follows instructions- then magical stuff begins to happen.

Magic, because it's **not** an exact science, there's some art to it, as it is done with a lot of **intuition**. GPTisms are patterns that the model really **really** "wants" to follow, it's quite hard to dissuade it. But if you yeet a couple of layers and rearrange them, boy does it get hard to spew those shivers down the spine... and instead the model starts spewing stuff that it was never intended to. It breaks its patterns and introduces some healthy chaos into the mix.

This model, **Eximius_Persona_5B**, is the result of multiple merges, that have been tuned, then merged again, then... for many times and iterations. The base was LLAMA 3.2 3B and I focused on achieving the following **4 traits**, in that specific order:

- **2nd Highest rated model** in the 3-6B category according to a closed external benchmark. See details at the buttom of the page.

- Varied speech patterns

- Roleplay ability

- Long context coherency

- Instruction following

For me, getting varied speech patterns was more important than instruction following, for instruction following we got API models, or LLAMA 3.3. Many models are excellent assistants, yet they all sound pretty much the same.

I also wanted to make use of my **4090m 16GB** while my workstation crunches **Phi-4'** brain. Making a nice 5B model aligns with my goal of making AI accessible and fun for everyone, and hence **Eximius_Persona_5B** was born. Let this also be a call to action for more people to make AI models, you don't have to have multiple GPUs or spend a fortune on the cloud (although that definitely opens up options), you can do plenty with a mere 16GB of VRAM. And in case 16GB seems out of reach too, I should mention that Google Collab gives access to a free T4.

I uploaded a more funky, less stable, and thiccer version of Eximius_Persona to my prototyping org here:

[Eximius_Persona with 84 Layers from various checkpoints](https://huggingface.co/Sicarius-Prototyping/Eximius_Persona_84L)

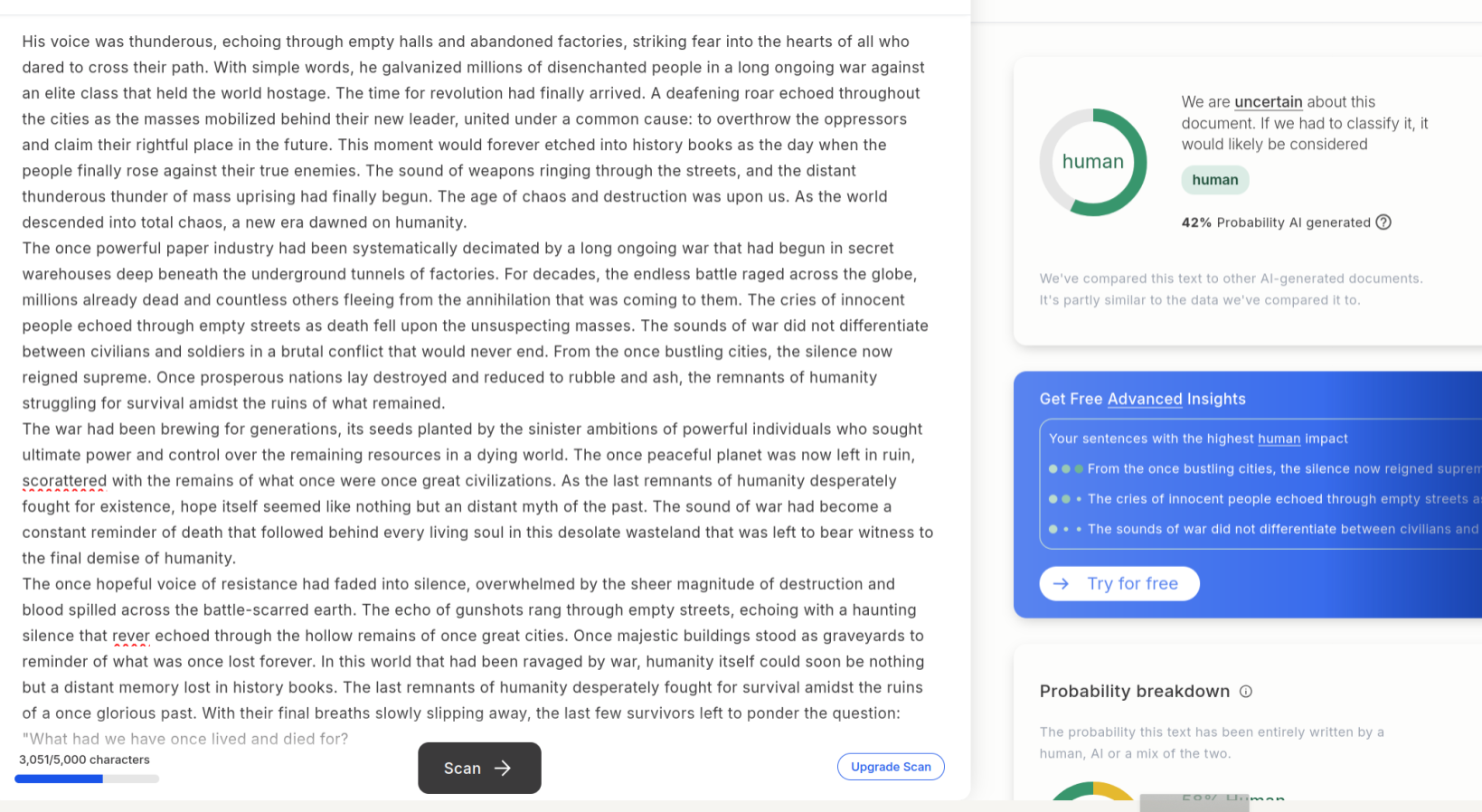

(from some early tests, occasionally it outputs stories that fool GPTZERO that it was written by a human- **60% human**, 40% AI with a lucky roll)

See example:

---

### TL;DR

- **Fun & Fresh Roleplay** flavour.

- **Interesting speech patterns** in creative writing.

- **Good long context coherency** in Roleplay.

- **Occasionally** outputs quite **human-like** stories.

- **50 Layers** LLAMA 3.2, fully coherent.

- **Strong performance** in general for a **5B model**.

### Important: Make sure to use the correct settings!

[Assistant settings](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B#recommended-settings-for-assistant-mode)

[Roleplay settings](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B#recommended-settings-for-roleplay-mode)

---

## Available quantizations:

- Original: [FP16](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B)

- GGUF: [Static Quants](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B_GGUF) | [iMatrix_GGUF](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B_iMatrix)

- EXL2: [3.5 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-3.5bpw) | [4.0 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-4.0bpw) | [5.0 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-5.0bpw) | [6.0 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-6.0bpw) | [7.0 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-7.0bpw) | [8.0 bpw](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B-8.0bpw)

- Specialized: [FP8](https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B_FP8)
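When picking a quantization, a rough rule of thumb is that the weights alone take about parameters × bits-per-weight ÷ 8 bytes. The sketch below assumes a nominal 5e9 parameters (the exact count may differ; check the model config) and ignores the KV cache, activations, and runtime overhead, so real VRAM usage will be higher, especially at long context. GGUF quant types mix several bit widths, so their effective bits per weight is only approximate.

```python
def weight_gib(num_params, bits_per_weight):
    """Approximate size of the weights alone, in GiB (no KV cache or overhead)."""
    return num_params * bits_per_weight / 8 / 2**30


NOMINAL_PARAMS = 5e9  # assumption: nominal "5B"; the exact parameter count may differ

for label, bpw in [("FP16", 16), ("FP8", 8), ("EXL2 8.0", 8.0),
                   ("EXL2 4.0", 4.0), ("EXL2 3.5", 3.5)]:
    print(f"{label:>9}: ~{weight_gib(NOMINAL_PARAMS, bpw):.1f} GiB")
```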

---

## Model Details

- Intended use: **Role-Play**, **Creative Writing**, General Tasks.

- Censorship level: Medium (**5 / 10**, where 10 is completely uncensored)

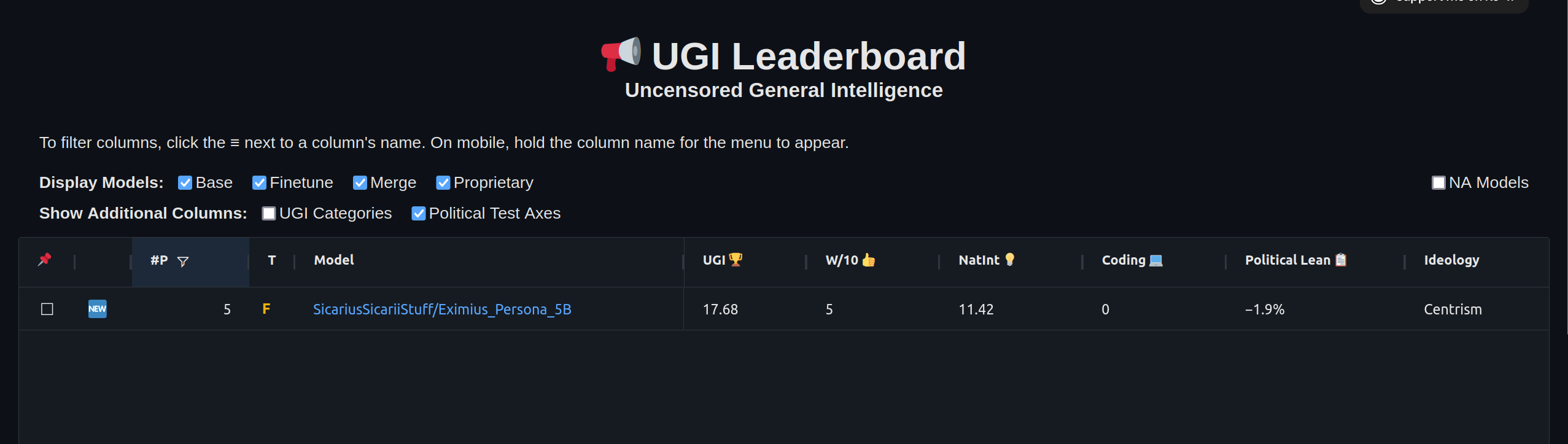

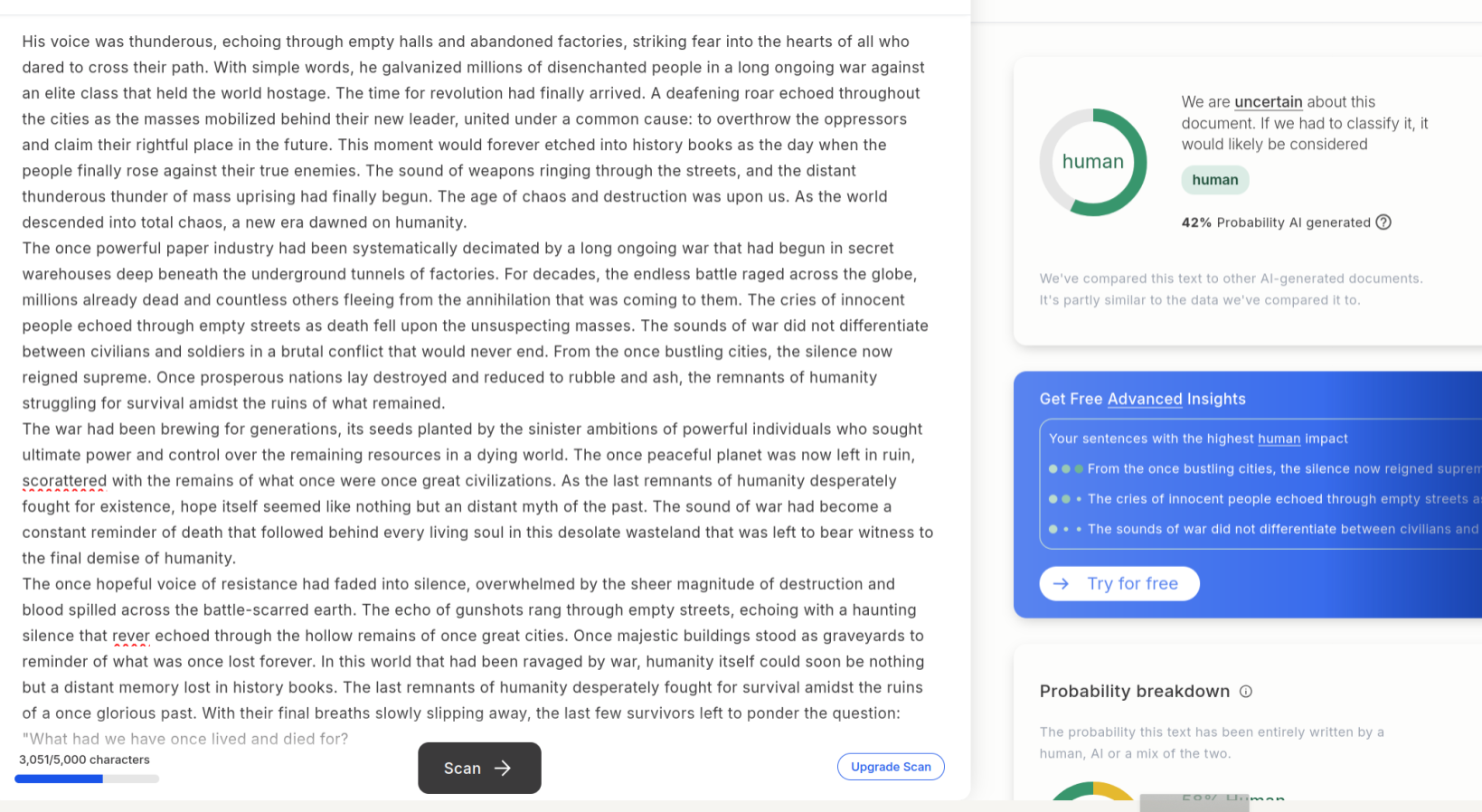

## UGI score:

### Don't use it for coding :)

---

# Regarding the format:

It is **HIGHLY RECOMMENDED** to use the **Roleplay / Adventure format the model was trained on**; see the examples below for syntax. It allows for **very fast and easy** writing of character cards with a **minimal amount of tokens**. It's a modification of an old-skool CAI-style format I call **SICAtxt** (**S**imple, **I**nexpensive **C**haracter **A**ttributes plain-text):

---

## **SICAtxt** for **roleplay**:

```
X's Persona: X is a .....
Traits:
Likes:
Dislikes:
Quirks:
Goals:
Dialogue example
```

## **SICAtxt** for **Adventure:**

```
Adventure:
$World_Setting:
$Scenario:
```
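Since SICAtxt is plain text, a card can be assembled with a bit of string formatting. The helpers below are hypothetical conveniences, not part of any official tooling: the field names come from the templates above, while joining list fields with commas and putting one field per line are assumptions about layout.

```python
def roleplay_card(name, persona, traits, likes, dislikes, quirks, goals, dialogue):
    """Build a SICAtxt roleplay card (hypothetical helper; list fields are comma-joined)."""
    lines = [
        f"{name}'s Persona: {persona}",
        f"Traits: {', '.join(traits)}",
        f"Likes: {', '.join(likes)}",
        f"Dislikes: {', '.join(dislikes)}",
        f"Quirks: {', '.join(quirks)}",
        f"Goals: {', '.join(goals)}",
        "Dialogue example",
        dialogue,
    ]
    return "\n".join(lines)


def adventure_card(world_setting, scenario):
    """Build a SICAtxt adventure card (hypothetical helper)."""
    return "\n".join([
        "Adventure:",
        f"$World_Setting: {world_setting}",
        f"$Scenario: {scenario}",
    ])


card = roleplay_card(
    name="Mira",
    persona="Mira is a sarcastic starship mechanic.",
    traits=["sarcastic", "loyal"],
    likes=["engines", "coffee"],
    dislikes=["bureaucrats"],
    quirks=["names every wrench"],
    goals=["own her own ship"],
    dialogue='Mira: *wipes grease off her hands* "You broke it again, didn\'t you?"',
)
print(card)
```

The persona line follows the "X's Persona: X is a ..." pattern, so the persona text itself should start with the character's name.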

---

# Model instruction template: Llama-3-Instruct

```
<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{system_prompt}<|eot_id|><|start_header_id|>user<|end_header_id|>

{input}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{output}<|eot_id|>
```
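For backends that take a raw prompt string rather than a list of chat messages, the template above can be filled in as sketched below, stopping right after the assistant header so the model writes `{output}` and ends it with `<|eot_id|>`. This is an illustration, not a replacement for proper tooling: with Hugging Face transformers, the tokenizer's `apply_chat_template` is the safer choice, since the bundled chat template may add content this helper omits. Also, many tokenizers prepend `<|begin_of_text|>` on their own; if yours does, drop it here to avoid a duplicated BOS token.

```python
def llama3_prompt(system_prompt, user_input):
    """Fill the Llama-3-Instruct template up to (and including) the assistant header.

    The model's reply is generated after the blank line that follows the
    assistant header and should end with <|eot_id|>.
    """
    return (
        "<|begin_of_text|>"
        "<|start_header_id|>system<|end_header_id|>\n\n"
        f"{system_prompt}<|eot_id|>"
        "<|start_header_id|>user<|end_header_id|>\n\n"
        f"{user_input}<|eot_id|>"
        "<|start_header_id|>assistant<|end_header_id|>\n\n"
    )


prompt = llama3_prompt(
    "You are Mira, a sarcastic starship mechanic.",
    "What was your name again?",
)
print(prompt)
```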

---

Roleplay format: Classic Internet RP

```
*action* speech *narration*
```

### The model is pretty smart, so it might handle other formats as well, but it was trained and tested specifically with the classic internet RP style in mind.
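In this style, text wrapped in asterisks is action or narration, and everything outside the asterisks is speech. A minimal sketch of splitting a reply into those segments (for display or post-processing), assuming asterisks are always paired and never nested; real model output will not always be that tidy. Both action and narration are labeled `"action"` here, since the format marks them the same way.

```python
import re

# An asterisk-delimited span such as *smiles*; assumes no nested or escaped asterisks.
ACTION = re.compile(r"\*([^*]+)\*")


def split_rp(message):
    """Split a classic internet RP message into ("action", text) / ("speech", text) pairs."""
    segments = []
    pos = 0
    for match in ACTION.finditer(message):
        speech = message[pos:match.start()].strip()
        if speech:
            segments.append(("speech", speech))
        segments.append(("action", match.group(1).strip()))
        pos = match.end()
    tail = message[pos:].strip()
    if tail:
        segments.append(("speech", tail))
    return segments


print(split_rp('*leans back* "Name\'s Mira." *She smirks.*'))
```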

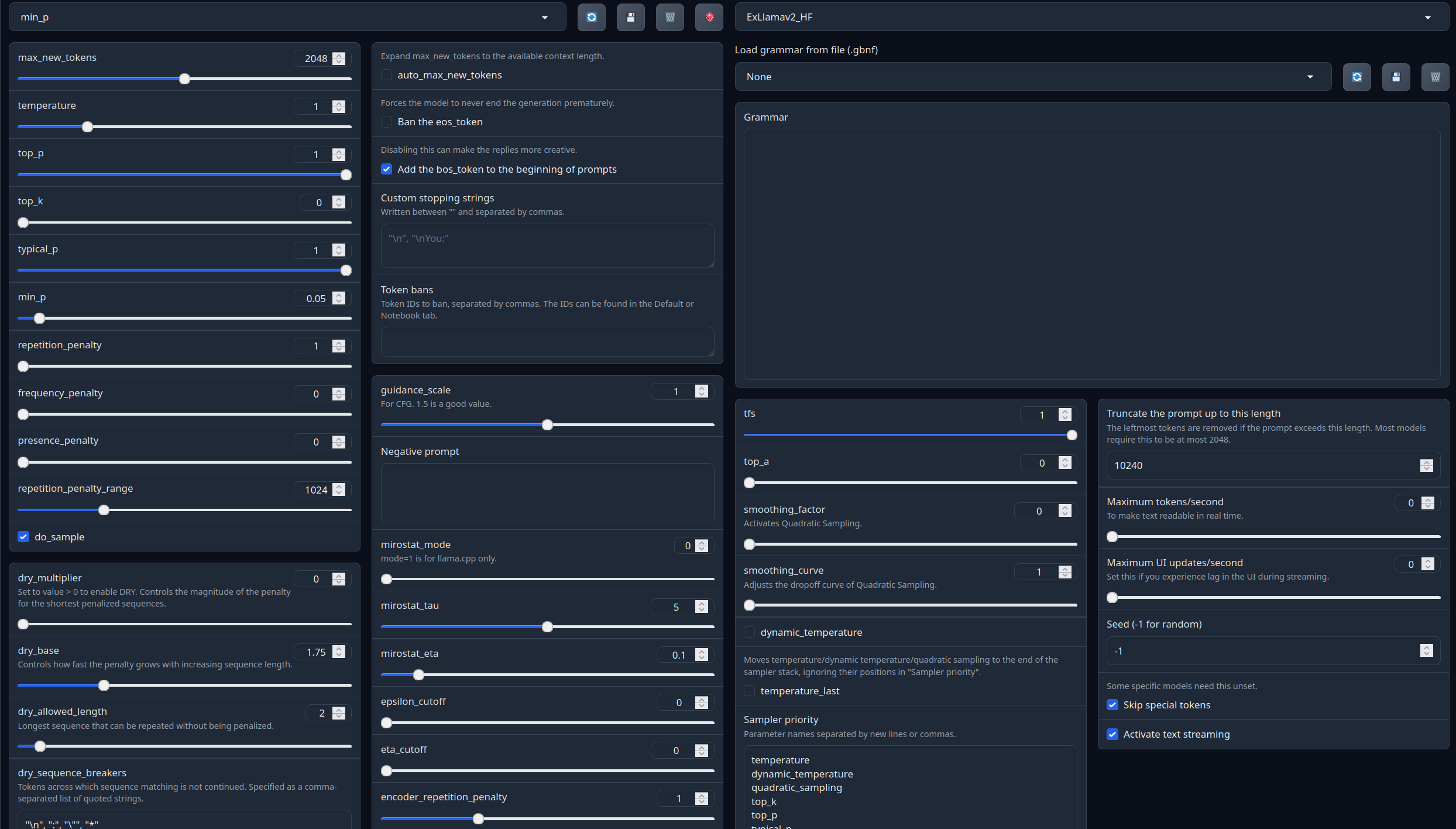

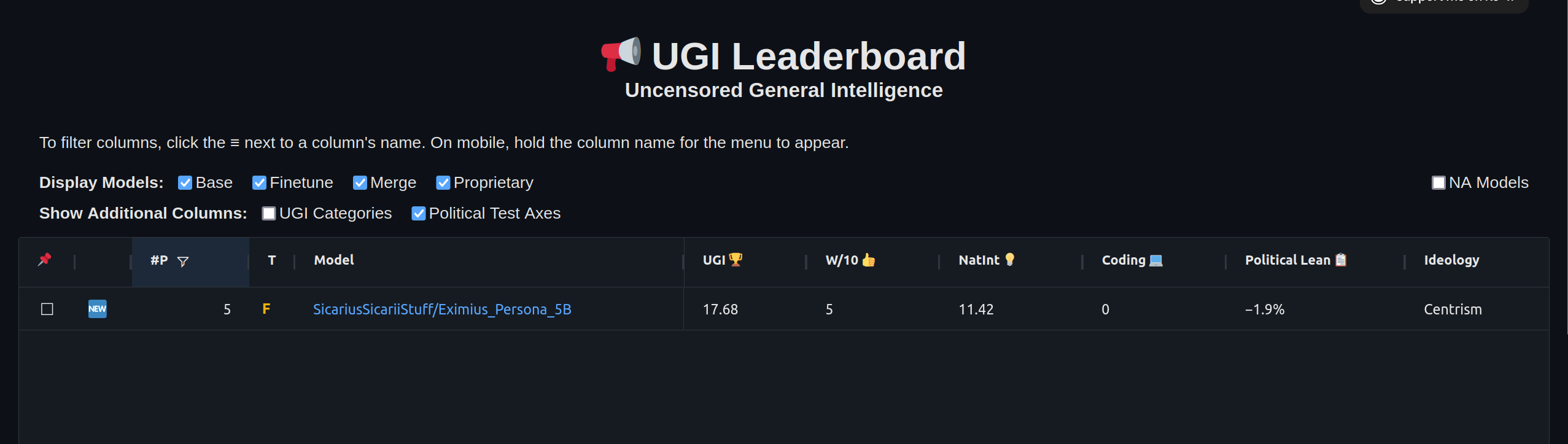

## Recommended settings for assistant mode

Full generation settings: Debug Deterministic.

Full generation settings: min_p.

---

## Recommended settings for Roleplay mode

Roleplay settings:

A good repetition_penalty value is between 1.12 and 1.15; feel free to experiment.

With these settings, each output message should be neatly displayed in 1-3 paragraphs, with 1-2 being the most common. A simple message ("What was your name again?") gets a single-paragraph response.

min_p for RP works too, but is more likely to put everything into one large paragraph instead of neatly formatted short ones. Feel free to switch between the two.

(Open the image in a new window to better see the full details)

```
temperature: 0.8
top_p: 0.95
top_k: 25
typical_p: 1
min_p: 0
repetition_penalty: 1.12
repetition_penalty_range: 1024
```
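To get a feel for what each of these knobs does, here is a toy, pure-Python version of a single sampling step on a made-up 4-token logit vector. It follows roughly the order used by Hugging Face transformers (repetition penalty, then temperature, then top-k, then top-p); other backends may order or implement these steps differently. `typical_p: 1` and `min_p: 0` disable those filters, so they are left out. `repetition_penalty_range` is a frontend setting (e.g. in text-generation-webui), not a transformers parameter; modeling it as "only penalize tokens among the last N tokens of context, 0 meaning all" is an assumption.

```python
import math
import random


def apply_repetition_penalty(logits, recent_tokens, penalty):
    """CTRL-style penalty: make tokens that already appeared less likely."""
    out = list(logits)
    for tok in set(recent_tokens):
        # Dividing a positive logit (or multiplying a negative one) lowers it.
        out[tok] = out[tok] / penalty if out[tok] > 0 else out[tok] * penalty
    return out


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_top_p(probs, top_k, top_p):
    """Keep the top_k most likely tokens, then the smallest prefix whose mass reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k > 0:
        order = order[:top_k]
    mass = sum(probs[i] for i in order)  # renormalize over the top-k survivors
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i] / mass
        if cumulative >= top_p:
            break
    return kept


def sample_next(logits, context, temperature=0.8, top_p=0.95, top_k=25,
                repetition_penalty=1.12, repetition_penalty_range=1024, rng=random):
    # Assumed semantics: penalize only tokens in the last N of context (0 = whole context).
    window = context[-repetition_penalty_range:] if repetition_penalty_range > 0 else context
    penalized = apply_repetition_penalty(logits, window, repetition_penalty)
    probs = softmax([x / temperature for x in penalized])  # temperature must be > 0
    kept = top_k_top_p(probs, top_k, top_p)
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]


# Toy vocabulary of 4 tokens; token 0 already appears in the context.
logits = [5.0, 4.0, 0.0, -1.0]
print(sample_next(logits, context=[0], rng=random.Random(0)))
```

In this toy case the penalty shrinks token 0's share from roughly 78% to roughly 64%, and top_p 0.95 then cuts the two unlikely tokens entirely; that is the kind of nudge away from the "obvious" continuation these settings aim for.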


---

**Other recommended generation Presets:**

Midnight Enigma

```
max_new_tokens: 512
temperature: 0.98
top_p: 0.37
top_k: 100
typical_p: 1
min_p: 0
repetition_penalty: 1.18
do_sample: True
```

Divine Intellect

```
max_new_tokens: 512
temperature: 1.31
top_p: 0.14
top_k: 49
typical_p: 1
min_p: 0
repetition_penalty: 1.17
do_sample: True
```

simple-1

```
max_new_tokens: 512
temperature: 0.7
top_p: 0.9
top_k: 20
typical_p: 1
min_p: 0
repetition_penalty: 1.15
do_sample: True
```
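The presets above are plain `key: value` lists. If you run the model through Hugging Face transformers rather than a UI that ships these presets, a small parser can turn them into keyword arguments for `generate()`. This is a sketch: the accepted-key set below is based on the names shown in these presets, `min_p` needs a reasonably recent transformers version, and `repetition_penalty_range` has no direct transformers equivalent, so it is dropped.

```python
def _coerce(raw):
    """Turn 'True'/'False', integers, and floats into Python values; leave anything else as text."""
    if raw in ("True", "False"):
        return raw == "True"
    for cast in (int, float):
        try:
            return cast(raw)
        except ValueError:
            pass
    return raw


def parse_preset(text):
    """Parse 'key: value' lines into a dict."""
    preset = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        key, raw = line.split(":", 1)
        preset[key.strip()] = _coerce(raw.strip())
    return preset


# Keys assumed to map directly onto transformers generation arguments.
GENERATE_KEYS = {"max_new_tokens", "temperature", "top_p", "top_k",
                 "typical_p", "min_p", "repetition_penalty", "do_sample"}


def to_generate_kwargs(preset):
    return {k: v for k, v in preset.items() if k in GENERATE_KEYS}


DIVINE_INTELLECT = """
max_new_tokens: 512
temperature: 1.31
top_p: 0.14
top_k: 49
typical_p: 1
min_p: 0
repetition_penalty: 1.17
do_sample: True
"""

kwargs = to_generate_kwargs(parse_preset(DIVINE_INTELLECT))
print(kwargs)
# With a loaded transformers model, this would be used as: model.generate(**inputs, **kwargs)
```

Note how Divine Intellect pairs a high temperature (1.31) with a very low top_p (0.14): the temperature flattens the distribution, while top_p keeps only a small high-probability nucleus.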

---

Your support = more models

My Ko-fi page (Click here)

---

## Benchmarks

| Metric              | Value |
|---------------------|------:|
| Avg.                | 21.78 |
| IFEval (0-Shot)     | 65.60 |
| BBH (3-Shot)        | 22.20 |
| MATH Lvl 5 (4-Shot) |  9.89 |
| GPQA (0-shot)       |  1.90 |
| MuSR (0-shot)       |  7.33 |
| MMLU-PRO (5-shot)   | 23.78 |
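As a quick sanity check, the reported average is consistent with the unweighted mean of the six benchmark scores in the table:

```python
scores = {
    "IFEval (0-Shot)": 65.60,
    "BBH (3-Shot)": 22.20,
    "MATH Lvl 5 (4-Shot)": 9.89,
    "GPQA (0-shot)": 1.90,
    "MuSR (0-shot)": 7.33,
    "MMLU-PRO (5-shot)": 23.78,
}
average = sum(scores.values()) / len(scores)  # unweighted mean of the six scores
print(round(average, 2))  # 21.78
```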

---

# Additional benchmarks

On the **17th of February, 2025**, I became aware that the model was ranked **2nd place in the world** among **3-6B** models in a closed external benchmark.

Benchmarked on the following site:

```
https://moonride.hashnode.dev/biased-test-of-gpt-4-era-llms-300-models-deepseek-r1-included
```

---

## Citation Information

```
@llm{Eximius_Persona_5B,
  author = {SicariusSicariiStuff},
  title = {Eximius_Persona_5B},
  year = {2025},
  publisher = {Hugging Face},
  url = {https://huggingface.co/SicariusSicariiStuff/Eximius_Persona_5B}
}
```

---

## Other stuff

- [SLOP_Detector](https://github.com/SicariusSicariiStuff/SLOP_Detector) Nuke GPTisms, with SLOP detector.

- [LLAMA-3_8B_Unaligned](https://huggingface.co/SicariusSicariiStuff/LLAMA-3_8B_Unaligned) The grand project that started it all.

- [Blog and updates (Archived)](https://huggingface.co/SicariusSicariiStuff/Blog_And_Updates) Some updates, some rambles, sort of a mix between a diary and a blog.
