Add files using upload-large-folder tool

- .gitattributes +1 -0

- v0-20250513-030424/args.json +335 -0

- v0-20250513-030424/checkpoint-9/added_tokens.json +24 -0

- v0-20250513-030424/checkpoint-9/args.json +335 -0

- v0-20250513-030424/checkpoint-9/chat_template.jinja +7 -0

- v0-20250513-030424/checkpoint-9/config.json +574 -0

- v0-20250513-030424/checkpoint-9/generation_config.json +4 -0

- v0-20250513-030424/checkpoint-9/merges.txt +0 -0

- v0-20250513-030424/checkpoint-9/model-00001-of-00003.safetensors +3 -0

- v0-20250513-030424/checkpoint-9/model-00002-of-00003.safetensors +3 -0

- v0-20250513-030424/checkpoint-9/model-00003-of-00003.safetensors +3 -0

- v0-20250513-030424/checkpoint-9/model.safetensors.index.json +0 -0

- v0-20250513-030424/checkpoint-9/optimizer.pt +3 -0

- v0-20250513-030424/checkpoint-9/preprocessor_config.json +31 -0

- v0-20250513-030424/checkpoint-9/rng_state.pth +3 -0

- v0-20250513-030424/checkpoint-9/scheduler.pt +3 -0

- v0-20250513-030424/checkpoint-9/special_tokens_map.json +38 -0

- v0-20250513-030424/checkpoint-9/spk_dict.pt +3 -0

- v0-20250513-030424/checkpoint-9/tokenizer.json +3 -0

- v0-20250513-030424/checkpoint-9/tokenizer_config.json +222 -0

- v0-20250513-030424/checkpoint-9/trainer_state.json +63 -0

- v0-20250513-030424/checkpoint-9/training_args.bin +3 -0

- v0-20250513-030424/checkpoint-9/vocab.json +0 -0

- v0-20250513-030424/images/eval_loss.png +0 -0

- v0-20250513-030424/images/eval_runtime.png +0 -0

- v0-20250513-030424/images/eval_samples_per_second.png +0 -0

- v0-20250513-030424/images/eval_steps_per_second.png +0 -0

- v0-20250513-030424/images/eval_token_acc.png +0 -0

- v0-20250513-030424/images/train_epoch.png +0 -0

- v0-20250513-030424/images/train_grad_norm.png +0 -0

- v0-20250513-030424/images/train_learning_rate.png +0 -0

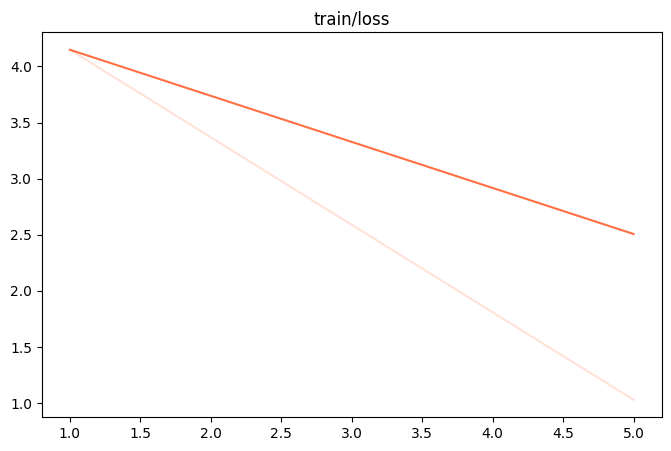

- v0-20250513-030424/images/train_loss.png +0 -0

- v0-20250513-030424/images/train_memory(GiB).png +0 -0

- v0-20250513-030424/images/train_token_acc.png +0 -0

- v0-20250513-030424/images/train_total_flos.png +0 -0

- v0-20250513-030424/images/train_train_loss.png +0 -0

- v0-20250513-030424/images/train_train_runtime.png +0 -0

- v0-20250513-030424/images/train_train_samples_per_second.png +0 -0

- v0-20250513-030424/images/train_train_speed(iter_s).png +0 -0

- v0-20250513-030424/images/train_train_steps_per_second.png +0 -0

- v0-20250513-030424/logging.jsonl +5 -0

- v0-20250513-030424/runs/events.out.tfevents.1747076673.dsw-239952-646df5498f-2dn6v.54937.0 +3 -0

- v0-20250513-030424/val_dataset.jsonl +1 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+v0-20250513-030424/checkpoint-9/tokenizer.json filter=lfs diff=lfs merge=lfs -text
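The hunk above adds one explicit path to the Git LFS rules. As a rough illustration of which uploaded files those four visible rules would cover, here is a minimal Python sketch. It is an approximation under stated assumptions, not Git's own matcher: `fnmatch` stands in for gitattributes pattern matching (its `*` also crosses `/`, which Git's does not), the helper name `matches_lfs` is hypothetical, and only the patterns shown in this hunk are included, so rules elsewhere in `.gitattributes` are not modeled.

```python
from fnmatch import fnmatchcase
from posixpath import basename

# Only the four patterns visible in the hunk above; the rest of
# .gitattributes is not shown in this diff and is not modeled here.
LFS_PATTERNS = [
    "*.zip",
    "*.zst",
    "*tfevents*",
    "v0-20250513-030424/checkpoint-9/tokenizer.json",
]


def matches_lfs(path, patterns=LFS_PATTERNS):
    """Approximate check: would `path` be routed through LFS by these rules?

    Assumption: a pattern without a slash is compared against the file name
    only, and a pattern with a slash against the whole repository path.
    """
    for pattern in patterns:
        target = path if "/" in pattern else basename(path)
        if fnmatchcase(target, pattern):
            return True
    return False


if __name__ == "__main__":
    for candidate in (
        "v0-20250513-030424/checkpoint-9/tokenizer.json",
        "v0-20250513-030424/runs/events.out.tfevents.1747076673.dsw-239952-646df5498f-2dn6v.54937.0",
        "v0-20250513-030424/args.json",
    ):
        print(candidate, matches_lfs(candidate))
```

Under these assumptions, the tokenizer file and the TensorBoard event file match a visible rule, while `args.json` does not.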

v0-20250513-030424/args.json

ADDED

@@ -0,0 +1,335 @@

+{
+  "model": "/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B",
+  "model_type": "qwen2_5_omni",
+  "model_revision": null,
+  "task_type": "causal_lm",
+  "torch_dtype": "bfloat16",
+  "attn_impl": null,
+  "num_labels": null,
+  "problem_type": null,
+  "rope_scaling": null,
+  "device_map": null,
+  "max_memory": {},
+  "local_repo_path": null,
+  "init_strategy": null,
+  "template": "qwen2_5_omni",
+  "system": null,
+  "max_length": null,
+  "truncation_strategy": "delete",
+  "max_pixels": null,
+  "agent_template": null,
+  "norm_bbox": null,
+  "response_prefix": null,
+  "padding_side": "right",
+  "loss_scale": "default",
+  "sequence_parallel_size": 1,
+  "use_chat_template": true,
+  "template_backend": "swift",
+  "dataset": [
+    "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/dataset.json"
+  ],
+  "val_dataset": [],
+  "split_dataset_ratio": 0.01,
+  "data_seed": 42,
+  "dataset_num_proc": 1,
+  "load_from_cache_file": false,
+  "dataset_shuffle": true,
+  "val_dataset_shuffle": false,
+  "streaming": false,
+  "interleave_prob": null,
+  "stopping_strategy": "first_exhausted",
+  "shuffle_buffer_size": 1000,
+  "download_mode": "reuse_dataset_if_exists",
+  "columns": {},
+  "strict": false,
+  "remove_unused_columns": true,
+  "model_name": [
+    null,
+    null
+  ],
+  "model_author": [
+    null,
+    null
+  ],
+  "custom_dataset_info": [],
+  "quant_method": null,
+  "quant_bits": null,
+  "hqq_axis": null,
+  "bnb_4bit_compute_dtype": "bfloat16",
+  "bnb_4bit_quant_type": "nf4",
+  "bnb_4bit_use_double_quant": true,
+  "bnb_4bit_quant_storage": null,
+  "max_new_tokens": 64,
+  "temperature": 0.0,
+  "top_k": null,
+  "top_p": null,
+  "repetition_penalty": null,
+  "num_beams": 1,
+  "stream": false,
+  "stop_words": [],
+  "logprobs": false,
+  "top_logprobs": null,
+  "ckpt_dir": null,
+  "lora_modules": [],
+  "tuner_backend": "peft",
+  "train_type": "full",
+  "adapters": [],
+  "external_plugins": [],
+  "seed": 42,
+  "model_kwargs": {},
+  "load_args": false,
+  "load_data_args": false,
+  "use_hf": false,
+  "hub_token": null,
+  "custom_register_path": [],
+  "ignore_args_error": false,
+  "use_swift_lora": false,
+  "output_dir": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424",
+  "overwrite_output_dir": false,
+  "do_train": false,
+  "do_eval": false,
+  "do_predict": false,
+  "eval_strategy": "steps",
+  "prediction_loss_only": false,
+  "per_device_train_batch_size": 2,
+  "per_device_eval_batch_size": 1,
+  "per_gpu_train_batch_size": null,
+  "per_gpu_eval_batch_size": null,
+  "gradient_accumulation_steps": null,
+  "eval_accumulation_steps": null,
+  "eval_delay": 0,
+  "torch_empty_cache_steps": null,
+  "learning_rate": 1e-05,
+  "weight_decay": 0.1,
+  "adam_beta1": 0.9,
+  "adam_beta2": 0.95,
+  "adam_epsilon": 1e-08,
+  "max_grad_norm": 1.0,
+  "num_train_epochs": 3.0,
+  "max_steps": -1,
+  "lr_scheduler_type": "cosine",
+  "lr_scheduler_kwargs": null,
+  "warmup_ratio": 0.0,
+  "warmup_steps": 0,
+  "log_level": "passive",
+  "log_level_replica": "warning",
+  "log_on_each_node": true,
+  "logging_dir": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424/runs",
+  "logging_strategy": "steps",
+  "logging_first_step": true,
+  "logging_steps": 5,
+  "logging_nan_inf_filter": true,
+  "save_strategy": "steps",
+  "save_steps": 500,
+  "save_total_limit": null,
+  "save_safetensors": true,
+  "save_on_each_node": false,
+  "save_only_model": false,
+  "restore_callback_states_from_checkpoint": false,
+  "no_cuda": false,
+  "use_cpu": false,
+  "use_mps_device": false,
+  "jit_mode_eval": false,
+  "use_ipex": false,
+  "bf16": true,
+  "fp16": false,
+  "fp16_opt_level": "O1",
+  "half_precision_backend": "auto",
+  "bf16_full_eval": false,
+  "fp16_full_eval": false,
+  "tf32": null,
+  "local_rank": -1,
+  "ddp_backend": null,
+  "tpu_num_cores": null,
+  "tpu_metrics_debug": false,
+  "debug": null,
+  "dataloader_drop_last": false,
+  "eval_steps": 500,
+  "dataloader_num_workers": null,
+  "dataloader_prefetch_factor": null,
+  "past_index": -1,
+  "run_name": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424",
+  "disable_tqdm": null,
+  "label_names": null,
+  "load_best_model_at_end": false,
+  "metric_for_best_model": "loss",
+  "greater_is_better": false,
+  "ignore_data_skip": false,
+  "fsdp": "",
+  "fsdp_min_num_params": 0,
+  "fsdp_config": null,
+  "fsdp_transformer_layer_cls_to_wrap": null,
+  "accelerator_config": {
+    "dispatch_batches": false
+  },
+  "deepspeed": null,
+  "label_smoothing_factor": 0.0,
+  "optim": "adamw_torch",
+  "optim_args": null,
+  "adafactor": false,
+  "group_by_length": false,
+  "length_column_name": "length",
+  "report_to": [
+    "tensorboard"
+  ],
+  "ddp_find_unused_parameters": null,
+  "ddp_bucket_cap_mb": null,
+  "ddp_broadcast_buffers": null,
+  "dataloader_pin_memory": true,
+  "dataloader_persistent_workers": false,
+  "skip_memory_metrics": true,
+  "use_legacy_prediction_loop": false,
+  "push_to_hub": false,
+  "resume_from_checkpoint": null,
+  "hub_model_id": null,
+  "hub_strategy": "every_save",
+  "hub_private_repo": null,
+  "hub_always_push": false,
+  "gradient_checkpointing": true,
+  "gradient_checkpointing_kwargs": null,
+  "include_inputs_for_metrics": false,
+  "include_for_metrics": [],
+  "eval_do_concat_batches": true,
+  "fp16_backend": "auto",
+  "push_to_hub_model_id": null,
+  "push_to_hub_organization": null,
+  "push_to_hub_token": null,
+  "mp_parameters": "",
+  "auto_find_batch_size": false,
+  "full_determinism": false,
+  "torchdynamo": null,
+  "ray_scope": "last",
+  "ddp_timeout": 1800,
+  "torch_compile": false,
+  "torch_compile_backend": null,
+  "torch_compile_mode": null,
+  "include_tokens_per_second": false,
+  "include_num_input_tokens_seen": false,
+  "neftune_noise_alpha": null,
+  "optim_target_modules": null,
+  "batch_eval_metrics": false,
+  "eval_on_start": false,
+  "use_liger_kernel": false,
+  "eval_use_gather_object": false,
+  "average_tokens_across_devices": false,
+  "sortish_sampler": false,
+  "predict_with_generate": false,
+  "generation_max_length": null,
+  "generation_num_beams": null,
+  "generation_config": null,
+  "check_model": true,
+  "acc_strategy": "token",
+  "train_dataloader_shuffle": true,
+  "max_epochs": null,
+  "metric_warmup_step": 0,
+  "fsdp_num": 1,
+  "acc_steps": 1,
+  "eval_use_evalscope": false,
+  "eval_datasets": [],
+  "eval_limit": null,
+  "eval_datasets_args": null,
+  "eval_generation_config": null,
+  "freeze_parameters": [
+    "thinker.audio_tower",
+    "thinker.visual",
+    "thinker.audio_tower.proj",
+    "thinker.visual.merger",
+    "talker",
+    "token2wav"
+  ],
+  "freeze_parameters_regex": null,
+  "freeze_parameters_ratio": 0.0,
+  "trainable_parameters": [],
+  "trainable_parameters_regex": null,
+  "freeze_llm": false,
+  "freeze_vit": true,
+  "freeze_aligner": true,
+  "target_modules": [
+    "all-linear"
+  ],
+  "target_regex": null,
+  "modules_to_save": [],
+  "lora_rank": 8,
+  "lora_alpha": 32,
+  "lora_dropout": 0.05,
+  "lora_bias": "none",
+  "lora_dtype": null,
+  "lorap_lr_ratio": null,
+  "use_rslora": false,
+  "use_dora": false,
+  "lora_ga_batch_size": 2,
+  "lora_ga_iters": 2,
+  "lora_ga_max_length": 1024,
+  "lora_ga_direction": "ArB2r",
+  "lora_ga_scale": "stable",
+  "lora_ga_stable_gamma": 16,
+  "init_weights": true,
+  "fourier_n_frequency": 2000,
+  "fourier_scaling": 300.0,
+  "boft_block_size": 4,
+  "boft_block_num": 0,
+  "boft_n_butterfly_factor": 1,
+  "boft_dropout": 0.0,
+  "vera_rank": 256,
+  "vera_projection_prng_key": 0,
+  "vera_dropout": 0.0,
+  "vera_d_initial": 0.1,
+  "adapter_act": "gelu",
+  "adapter_length": 128,
+  "use_galore": false,
+  "galore_target_modules": null,
+  "galore_rank": 128,
+  "galore_update_proj_gap": 50,
+  "galore_scale": 1.0,
+  "galore_proj_type": "std",
+  "galore_optim_per_parameter": false,
+  "galore_with_embedding": false,
+  "galore_quantization": false,
+  "galore_proj_quant": false,
+  "galore_proj_bits": 4,
+  "galore_proj_group_size": 256,
+  "galore_cos_threshold": 0.4,
+  "galore_gamma_proj": 2,
+  "galore_queue_size": 5,
+  "adalora_target_r": 8,
+  "adalora_init_r": 12,
+  "adalora_tinit": 0,
+  "adalora_tfinal": 0,
+  "adalora_deltaT": 1,
+  "adalora_beta1": 0.85,
+  "adalora_beta2": 0.85,
+  "adalora_orth_reg_weight": 0.5,
+  "llamapro_num_new_blocks": 4,
+  "llamapro_num_groups": null,
+  "lisa_activated_layers": 0,
+  "lisa_step_interval": 20,
+  "reft_layer_key": null,
+  "reft_layers": null,
+  "reft_rank": 4,
+  "reft_intervention_type": "LoreftIntervention",
+  "reft_args": null,
+  "swanlab_token": null,
+  "swanlab_project": null,
+  "swanlab_workspace": null,
+  "swanlab_exp_name": null,
+  "swanlab_mode": "cloud",
+  "add_version": true,
+  "resume_only_model": false,
+  "create_checkpoint_symlink": false,
+  "packing": false,
+  "lazy_tokenize": true,
+  "loss_type": null,
+  "optimizer": null,
+  "metric": null,
+  "zero_hpz_partition_size": null,
+  "rank": -1,
+  "global_world_size": 1,
+  "local_world_size": 1,
+  "model_suffix": "Qwen2.5-Omni-3B",
+  "model_info": "ModelInfo(model_type='qwen2_5_omni', model_dir='/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B', torch_dtype=torch.bfloat16, max_model_len=32768, quant_method=None, quant_bits=None, rope_scaling={'mrope_section': [16, 24, 24], 'rope_type': 'default', 'type': 'default'}, config=None, task_type='causal_lm', num_labels=None)",
+  "model_meta": "ModelMeta(model_type='qwen2_5_omni', model_groups=[ModelGroup(models=[Model(ms_model_id='Qwen/Qwen2.5-Omni-3B', hf_model_id='Qwen/Qwen2.5-Omni-3B', model_path=None, ms_revision=None, hf_revision=None), Model(ms_model_id='Qwen/Qwen2.5-Omni-7B', hf_model_id='Qwen/Qwen2.5-Omni-7B', model_path=None, ms_revision=None, hf_revision=None)], ignore_patterns=None, requires=None, tags=[])], template='qwen2_5_omni', get_function=<function get_model_tokenizer_qwen2_5_omni at 0x7f21a6587880>, model_arch='qwen2_5_omni', architectures=['Qwen2_5OmniModel'], additional_saved_files=['spk_dict.pt'], torch_dtype=None, is_multimodal=True, is_reward=False, task_type=None, ignore_patterns=['*.bin', '*.safetensors'], requires=['transformers>=4.50', 'soundfile', 'qwen_omni_utils', 'decord'], tags=[])",
+  "model_dir": "/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B",
+  "hub": "<class 'swift.hub.hub.MSHub'>",
+  "evaluation_strategy": "steps",
+  "training_args": "Seq2SeqTrainingArguments(output_dir='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424', overwrite_output_dir=False, do_train=False, do_eval=True, do_predict=False, eval_strategy=<IntervalStrategy.STEPS: 'steps'>, prediction_loss_only=False, per_device_train_batch_size=2, per_device_eval_batch_size=1, per_gpu_train_batch_size=None, per_gpu_eval_batch_size=None, gradient_accumulation_steps=8, eval_accumulation_steps=None, eval_delay=0, torch_empty_cache_steps=None, learning_rate=1e-05, weight_decay=0.1, adam_beta1=0.9, adam_beta2=0.95, adam_epsilon=1e-08, max_grad_norm=1.0, num_train_epochs=3.0, max_steps=-1, lr_scheduler_type=<SchedulerType.COSINE: 'cosine'>, lr_scheduler_kwargs=None, warmup_ratio=0.0, warmup_steps=0, log_level='passive', log_level_replica='warning', log_on_each_node=True, logging_dir='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424/runs', logging_strategy=<IntervalStrategy.STEPS: 'steps'>, logging_first_step=True, logging_steps=5, logging_nan_inf_filter=True, save_strategy=<SaveStrategy.STEPS: 'steps'>, save_steps=500, save_total_limit=None, save_safetensors=True, save_on_each_node=False, save_only_model=False, restore_callback_states_from_checkpoint=False, no_cuda=False, use_cpu=False, use_mps_device=False, seed=42, data_seed=42, jit_mode_eval=False, use_ipex=False, bf16=True, fp16=False, fp16_opt_level='O1', half_precision_backend='auto', bf16_full_eval=False, fp16_full_eval=False, tf32=None, local_rank=0, ddp_backend=None, tpu_num_cores=None, tpu_metrics_debug=False, debug=[], dataloader_drop_last=False, eval_steps=500, dataloader_num_workers=1, dataloader_prefetch_factor=10, past_index=-1, run_name='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424', disable_tqdm=False, remove_unused_columns=False, label_names=None, load_best_model_at_end=False, metric_for_best_model='loss', greater_is_better=False, ignore_data_skip=False, fsdp=[], fsdp_min_num_params=0, fsdp_config={'min_num_params': 0, 'xla': False, 'xla_fsdp_v2': False, 'xla_fsdp_grad_ckpt': False}, fsdp_transformer_layer_cls_to_wrap=None, accelerator_config=AcceleratorConfig(split_batches=False, dispatch_batches=False, even_batches=True, use_seedable_sampler=True, non_blocking=False, gradient_accumulation_kwargs=None, use_configured_state=False), deepspeed=None, label_smoothing_factor=0.0, optim=<OptimizerNames.ADAMW_TORCH: 'adamw_torch'>, optim_args=None, adafactor=False, group_by_length=False, length_column_name='length', report_to=['tensorboard'], ddp_find_unused_parameters=None, ddp_bucket_cap_mb=None, ddp_broadcast_buffers=None, dataloader_pin_memory=True, dataloader_persistent_workers=False, skip_memory_metrics=True, use_legacy_prediction_loop=False, push_to_hub=False, resume_from_checkpoint=None, hub_model_id=None, hub_strategy=<HubStrategy.EVERY_SAVE: 'every_save'>, hub_token=None, hub_private_repo=None, hub_always_push=False, gradient_checkpointing=True, gradient_checkpointing_kwargs=None, include_inputs_for_metrics=False, include_for_metrics=[], eval_do_concat_batches=True, fp16_backend='auto', push_to_hub_model_id=None, push_to_hub_organization=None, push_to_hub_token=None, mp_parameters='', auto_find_batch_size=False, full_determinism=False, torchdynamo=None, ray_scope='last', ddp_timeout=1800, torch_compile=False, torch_compile_backend=None, torch_compile_mode=None, include_tokens_per_second=None, include_num_input_tokens_seen=None, neftune_noise_alpha=None, optim_target_modules=None, batch_eval_metrics=False, eval_on_start=False, use_liger_kernel=False, eval_use_gather_object=False, average_tokens_across_devices=None, sortish_sampler=False, predict_with_generate=False, generation_max_length=None, generation_num_beams=None, generation_config=None, check_model=True, acc_strategy='token', train_dataloader_shuffle=True, max_epochs=None, metric_warmup_step=0, fsdp_num=1, acc_steps=1, eval_use_evalscope=False, eval_datasets=[], eval_limit=None, eval_datasets_args=None, eval_generation_config=None, train_type='full', optimizer=None, local_repo_path=None, galore_config=None)"
+}
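Two values in the `args.json` above are easy to misread. The top-level `"gradient_accumulation_steps"` is `null`, but the resolved `training_args` string reports `gradient_accumulation_steps=8`. The schedule is `"cosine"` with zero warmup. The sketch below works out what those settings imply. The helper names are hypothetical, `ILLUSTRATIVE_TOTAL_STEPS` is an assumed value chosen only for illustration (the diff does not state the total number of optimizer steps), and the cosine formula is the standard half-cosine decay rather than code taken from the training framework.

```python
import math

# Values copied from the args.json diff above.
PER_DEVICE_TRAIN_BATCH_SIZE = 2
GRADIENT_ACCUMULATION_STEPS = 8  # resolved value from the training_args string
GLOBAL_WORLD_SIZE = 1
LEARNING_RATE = 1e-05

# Assumption: the real number of optimizer steps is not given in the diff.
ILLUSTRATIVE_TOTAL_STEPS = 9


def effective_batch_size(per_device, grad_accum, world_size):
    """Samples contributing to one optimizer update."""
    return per_device * grad_accum * world_size


def cosine_lr(step, total_steps, base_lr=LEARNING_RATE):
    """Half-cosine decay from base_lr to 0 with no warmup."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


EFFECTIVE = effective_batch_size(
    PER_DEVICE_TRAIN_BATCH_SIZE, GRADIENT_ACCUMULATION_STEPS, GLOBAL_WORLD_SIZE
)

if __name__ == "__main__":
    print("effective batch size:", EFFECTIVE)
    for step in range(ILLUSTRATIVE_TOTAL_STEPS + 1):
        print(step, cosine_lr(step, ILLUSTRATIVE_TOTAL_STEPS))
```

With these inputs each optimizer update sees 2 × 8 × 1 = 16 samples, and the learning rate starts at 1e-05 and decays to zero at the final step.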

v0-20250513-030424/checkpoint-9/added_tokens.json

ADDED

@@ -0,0 +1,24 @@
+{
+  "</tool_call>": 151658,
+  "<tool_call>": 151657,
+  "<|AUDIO|>": 151646,
+  "<|IMAGE|>": 151655,
+  "<|VIDEO|>": 151656,
+  "<|audio_bos|>": 151647,
+  "<|audio_eos|>": 151648,
+  "<|box_end|>": 151649,
+  "<|endoftext|>": 151643,
+  "<|file_sep|>": 151664,
+  "<|fim_middle|>": 151660,
+  "<|fim_pad|>": 151662,
+  "<|fim_prefix|>": 151659,
+  "<|fim_suffix|>": 151661,
+  "<|im_end|>": 151645,
+  "<|im_start|>": 151644,
+  "<|quad_end|>": 151651,
+  "<|quad_start|>": 151650,
+  "<|repo_name|>": 151663,
+  "<|vision_bos|>": 151652,
+  "<|vision_eos|>": 151653,
+  "<|vision_pad|>": 151654
+}
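The file above lists its entries alphabetically by token string, which hides the ID layout. The following sketch copies the mapping from the diff, inverts it, and checks whether the IDs form one unbroken range. The helper name `ids_are_contiguous` is hypothetical, and nothing here depends on a tokenizer library.

```python
# Mapping copied verbatim from added_tokens.json in the diff above.
ADDED_TOKENS = {
    "</tool_call>": 151658,
    "<tool_call>": 151657,
    "<|AUDIO|>": 151646,
    "<|IMAGE|>": 151655,
    "<|VIDEO|>": 151656,
    "<|audio_bos|>": 151647,
    "<|audio_eos|>": 151648,
    "<|box_end|>": 151649,
    "<|endoftext|>": 151643,
    "<|file_sep|>": 151664,
    "<|fim_middle|>": 151660,
    "<|fim_pad|>": 151662,
    "<|fim_prefix|>": 151659,
    "<|fim_suffix|>": 151661,
    "<|im_end|>": 151645,
    "<|im_start|>": 151644,
    "<|quad_end|>": 151651,
    "<|quad_start|>": 151650,
    "<|repo_name|>": 151663,
    "<|vision_bos|>": 151652,
    "<|vision_eos|>": 151653,
    "<|vision_pad|>": 151654,
}

# Reverse lookup: token ID -> token string.
ID_TO_TOKEN = {token_id: token for token, token_id in ADDED_TOKENS.items()}


def ids_are_contiguous(mapping):
    """True when the IDs cover every integer between their min and max."""
    ids = sorted(mapping.values())
    return ids == list(range(ids[0], ids[-1] + 1))


if __name__ == "__main__":
    for token_id in sorted(ID_TO_TOKEN):
        print(token_id, ID_TO_TOKEN[token_id])
    print("contiguous:", ids_are_contiguous(ADDED_TOKENS))
```

Sorted by ID, the 22 entries run from 151643 (`<|endoftext|>`) to 151664 (`<|file_sep|>`) with no gaps.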

v0-20250513-030424/checkpoint-9/args.json

ADDED

@@ -0,0 +1,335 @@

+{
+  "model": "/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B",
+  "model_type": "qwen2_5_omni",
+  "model_revision": null,
+  "task_type": "causal_lm",
+  "torch_dtype": "bfloat16",
+  "attn_impl": null,
+  "num_labels": null,
+  "problem_type": null,
+  "rope_scaling": null,
+  "device_map": null,
+  "max_memory": {},
+  "local_repo_path": null,
+  "init_strategy": null,
+  "template": "qwen2_5_omni",
+  "system": null,
+  "max_length": null,
+  "truncation_strategy": "delete",
+  "max_pixels": null,
+  "agent_template": null,
+  "norm_bbox": null,
+  "response_prefix": null,
+  "padding_side": "right",
+  "loss_scale": "default",
+  "sequence_parallel_size": 1,
+  "use_chat_template": true,
+  "template_backend": "swift",
+  "dataset": [
+    "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/dataset.json"
+  ],
+  "val_dataset": [],
+  "split_dataset_ratio": 0.01,
+  "data_seed": 42,
+  "dataset_num_proc": 1,
+  "load_from_cache_file": false,
+  "dataset_shuffle": true,
+  "val_dataset_shuffle": false,
+  "streaming": false,
+  "interleave_prob": null,
+  "stopping_strategy": "first_exhausted",
+  "shuffle_buffer_size": 1000,
+  "download_mode": "reuse_dataset_if_exists",
+  "columns": {},
+  "strict": false,
+  "remove_unused_columns": true,
+  "model_name": [
+    null,
+    null
+  ],
+  "model_author": [
+    null,
+    null
+  ],
+  "custom_dataset_info": [],
+  "quant_method": null,
+  "quant_bits": null,
+  "hqq_axis": null,
+  "bnb_4bit_compute_dtype": "bfloat16",
+  "bnb_4bit_quant_type": "nf4",
+  "bnb_4bit_use_double_quant": true,
+  "bnb_4bit_quant_storage": null,
+  "max_new_tokens": 64,
+  "temperature": 0.0,
+  "top_k": null,
+  "top_p": null,
+  "repetition_penalty": null,
+  "num_beams": 1,
+  "stream": false,
+  "stop_words": [],
+  "logprobs": false,
+  "top_logprobs": null,
+  "ckpt_dir": null,
+  "lora_modules": [],
+  "tuner_backend": "peft",
+  "train_type": "full",
+  "adapters": [],
+  "external_plugins": [],
+  "seed": 42,
+  "model_kwargs": {},
+  "load_args": false,
+  "load_data_args": false,
+  "use_hf": false,
+  "hub_token": null,
+  "custom_register_path": [],
+  "ignore_args_error": false,
+  "use_swift_lora": false,
+  "output_dir": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424",
+  "overwrite_output_dir": false,
+  "do_train": false,
+  "do_eval": false,
+  "do_predict": false,
+  "eval_strategy": "steps",
+  "prediction_loss_only": false,
+  "per_device_train_batch_size": 2,
+  "per_device_eval_batch_size": 1,
+  "per_gpu_train_batch_size": null,
+  "per_gpu_eval_batch_size": null,
+  "gradient_accumulation_steps": null,
+  "eval_accumulation_steps": null,
+  "eval_delay": 0,
+  "torch_empty_cache_steps": null,
+  "learning_rate": 1e-05,
+  "weight_decay": 0.1,
+  "adam_beta1": 0.9,
+  "adam_beta2": 0.95,
+  "adam_epsilon": 1e-08,
+  "max_grad_norm": 1.0,
+  "num_train_epochs": 3.0,
+  "max_steps": -1,
+  "lr_scheduler_type": "cosine",
+  "lr_scheduler_kwargs": null,
+  "warmup_ratio": 0.0,
+  "warmup_steps": 0,

| 114 |

+

"log_level": "passive",

|

| 115 |

+

"log_level_replica": "warning",

|

| 116 |

+

"log_on_each_node": true,

|

| 117 |

+

"logging_dir": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424/runs",

|

| 118 |

+

"logging_strategy": "steps",

|

| 119 |

+

"logging_first_step": true,

|

| 120 |

+

"logging_steps": 5,

|

| 121 |

+

"logging_nan_inf_filter": true,

|

| 122 |

+

"save_strategy": "steps",

|

| 123 |

+

"save_steps": 500,

|

| 124 |

+

"save_total_limit": null,

|

| 125 |

+

"save_safetensors": true,

|

| 126 |

+

"save_on_each_node": false,

|

| 127 |

+

"save_only_model": false,

|

| 128 |

+

"restore_callback_states_from_checkpoint": false,

|

| 129 |

+

"no_cuda": false,

|

| 130 |

+

"use_cpu": false,

|

| 131 |

+

"use_mps_device": false,

|

| 132 |

+

"jit_mode_eval": false,

|

| 133 |

+

"use_ipex": false,

|

| 134 |

+

"bf16": true,

|

| 135 |

+

"fp16": false,

|

| 136 |

+

"fp16_opt_level": "O1",

|

| 137 |

+

"half_precision_backend": "auto",

|

| 138 |

+

"bf16_full_eval": false,

|

| 139 |

+

"fp16_full_eval": false,

|

| 140 |

+

"tf32": null,

|

| 141 |

+

"local_rank": -1,

|

| 142 |

+

"ddp_backend": null,

|

| 143 |

+

"tpu_num_cores": null,

|

| 144 |

+

"tpu_metrics_debug": false,

|

| 145 |

+

"debug": null,

|

| 146 |

+

"dataloader_drop_last": false,

|

| 147 |

+

"eval_steps": 500,

|

| 148 |

+

"dataloader_num_workers": null,

|

| 149 |

+

"dataloader_prefetch_factor": null,

|

| 150 |

+

"past_index": -1,

|

| 151 |

+

"run_name": "/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424",

|

| 152 |

+

"disable_tqdm": null,

|

| 153 |

+

"label_names": null,

|

| 154 |

+

"load_best_model_at_end": false,

|

| 155 |

+

"metric_for_best_model": "loss",

|

| 156 |

+

"greater_is_better": false,

|

| 157 |

+

"ignore_data_skip": false,

|

| 158 |

+

"fsdp": "",

|

| 159 |

+

"fsdp_min_num_params": 0,

|

| 160 |

+

"fsdp_config": null,

|

| 161 |

+

"fsdp_transformer_layer_cls_to_wrap": null,

|

| 162 |

+

"accelerator_config": {

|

| 163 |

+

"dispatch_batches": false

|

| 164 |

+

},

|

| 165 |

+

"deepspeed": null,

|

| 166 |

+

"label_smoothing_factor": 0.0,

|

| 167 |

+

"optim": "adamw_torch",

|

| 168 |

+

"optim_args": null,

|

| 169 |

+

"adafactor": false,

|

| 170 |

+

"group_by_length": false,

|

| 171 |

+

"length_column_name": "length",

|

| 172 |

+

"report_to": [

|

| 173 |

+

"tensorboard"

|

| 174 |

+

],

|

| 175 |

+

"ddp_find_unused_parameters": null,

|

| 176 |

+

"ddp_bucket_cap_mb": null,

|

| 177 |

+

"ddp_broadcast_buffers": null,

|

| 178 |

+

"dataloader_pin_memory": true,

|

| 179 |

+

"dataloader_persistent_workers": false,

|

| 180 |

+

"skip_memory_metrics": true,

|

| 181 |

+

"use_legacy_prediction_loop": false,

|

| 182 |

+

"push_to_hub": false,

|

| 183 |

+

"resume_from_checkpoint": null,

|

| 184 |

+

"hub_model_id": null,

|

| 185 |

+

"hub_strategy": "every_save",

|

| 186 |

+

"hub_private_repo": null,

|

| 187 |

+

"hub_always_push": false,

|

| 188 |

+

"gradient_checkpointing": true,

|

| 189 |

+

"gradient_checkpointing_kwargs": null,

|

| 190 |

+

"include_inputs_for_metrics": false,

|

| 191 |

+

"include_for_metrics": [],

|

| 192 |

+

"eval_do_concat_batches": true,

|

| 193 |

+

"fp16_backend": "auto",

|

| 194 |

+

"push_to_hub_model_id": null,

|

| 195 |

+

"push_to_hub_organization": null,

|

| 196 |

+

"push_to_hub_token": null,

|

| 197 |

+

"mp_parameters": "",

|

| 198 |

+

"auto_find_batch_size": false,

|

| 199 |

+

"full_determinism": false,

|

| 200 |

+

"torchdynamo": null,

|

| 201 |

+

"ray_scope": "last",

|

| 202 |

+

"ddp_timeout": 1800,

|

| 203 |

+

"torch_compile": false,

|

| 204 |

+

"torch_compile_backend": null,

|

| 205 |

+

"torch_compile_mode": null,

|

| 206 |

+

"include_tokens_per_second": false,

|

| 207 |

+

"include_num_input_tokens_seen": false,

|

| 208 |

+

"neftune_noise_alpha": null,

|

| 209 |

+

"optim_target_modules": null,

|

| 210 |

+

"batch_eval_metrics": false,

|

| 211 |

+

"eval_on_start": false,

|

| 212 |

+

"use_liger_kernel": false,

|

| 213 |

+

"eval_use_gather_object": false,

|

| 214 |

+

"average_tokens_across_devices": false,

|

| 215 |

+

"sortish_sampler": false,

|

| 216 |

+

"predict_with_generate": false,

|

| 217 |

+

"generation_max_length": null,

|

| 218 |

+

"generation_num_beams": null,

|

| 219 |

+

"generation_config": null,

|

| 220 |

+

"check_model": true,

|

| 221 |

+

"acc_strategy": "token",

|

| 222 |

+

"train_dataloader_shuffle": true,

|

| 223 |

+

"max_epochs": null,

|

| 224 |

+

"metric_warmup_step": 0,

|

| 225 |

+

"fsdp_num": 1,

|

| 226 |

+

"acc_steps": 1,

|

| 227 |

+

"eval_use_evalscope": false,

|

| 228 |

+

"eval_datasets": [],

|

| 229 |

+

"eval_limit": null,

|

| 230 |

+

"eval_datasets_args": null,

|

| 231 |

+

"eval_generation_config": null,

|

| 232 |

+

"freeze_parameters": [

|

| 233 |

+

"thinker.audio_tower",

|

| 234 |

+

"thinker.visual",

|

| 235 |

+

"thinker.audio_tower.proj",

|

| 236 |

+

"thinker.visual.merger",

|

| 237 |

+

"talker",

|

| 238 |

+

"token2wav"

|

| 239 |

+

],

|

| 240 |

+

"freeze_parameters_regex": null,

|

| 241 |

+

"freeze_parameters_ratio": 0.0,

|

| 242 |

+

"trainable_parameters": [],

|

| 243 |

+

"trainable_parameters_regex": null,

|

| 244 |

+

"freeze_llm": false,

|

| 245 |

+

"freeze_vit": true,

|

| 246 |

+

"freeze_aligner": true,

|

| 247 |

+

"target_modules": [

|

| 248 |

+

"all-linear"

|

| 249 |

+

],

|

| 250 |

+

"target_regex": null,

|

| 251 |

+

"modules_to_save": [],

|

| 252 |

+

"lora_rank": 8,

|

| 253 |

+

"lora_alpha": 32,

|

| 254 |

+

"lora_dropout": 0.05,

|

| 255 |

+

"lora_bias": "none",

|

| 256 |

+

"lora_dtype": null,

|

| 257 |

+

"lorap_lr_ratio": null,

|

| 258 |

+

"use_rslora": false,

|

| 259 |

+

"use_dora": false,

|

| 260 |

+

"lora_ga_batch_size": 2,

|

| 261 |

+

"lora_ga_iters": 2,

|

| 262 |

+

"lora_ga_max_length": 1024,

|

| 263 |

+

"lora_ga_direction": "ArB2r",

|

| 264 |

+

"lora_ga_scale": "stable",

|

| 265 |

+

"lora_ga_stable_gamma": 16,

|

| 266 |

+

"init_weights": true,

|

| 267 |

+

"fourier_n_frequency": 2000,

|

| 268 |

+

"fourier_scaling": 300.0,

|

| 269 |

+

"boft_block_size": 4,

|

| 270 |

+

"boft_block_num": 0,

|

| 271 |

+

"boft_n_butterfly_factor": 1,

|

| 272 |

+

"boft_dropout": 0.0,

|

| 273 |

+

"vera_rank": 256,

|

| 274 |

+

"vera_projection_prng_key": 0,

|

| 275 |

+

"vera_dropout": 0.0,

|

| 276 |

+

"vera_d_initial": 0.1,

|

| 277 |

+

"adapter_act": "gelu",

|

| 278 |

+

"adapter_length": 128,

|

| 279 |

+

"use_galore": false,

|

| 280 |

+

"galore_target_modules": null,

|

| 281 |

+

"galore_rank": 128,

|

| 282 |

+

"galore_update_proj_gap": 50,

|

| 283 |

+

"galore_scale": 1.0,

|

| 284 |

+

"galore_proj_type": "std",

|

| 285 |

+

"galore_optim_per_parameter": false,

|

| 286 |

+

"galore_with_embedding": false,

|

| 287 |

+

"galore_quantization": false,

|

| 288 |

+

"galore_proj_quant": false,

|

| 289 |

+

"galore_proj_bits": 4,

|

| 290 |

+

"galore_proj_group_size": 256,

|

| 291 |

+

"galore_cos_threshold": 0.4,

|

| 292 |

+

"galore_gamma_proj": 2,

|

| 293 |

+

"galore_queue_size": 5,

|

| 294 |

+

"adalora_target_r": 8,

|

| 295 |

+

"adalora_init_r": 12,

|

| 296 |

+

"adalora_tinit": 0,

|

| 297 |

+

"adalora_tfinal": 0,

|

| 298 |

+

"adalora_deltaT": 1,

|

| 299 |

+

"adalora_beta1": 0.85,

|

| 300 |

+

"adalora_beta2": 0.85,

|

| 301 |

+

"adalora_orth_reg_weight": 0.5,

|

| 302 |

+

"llamapro_num_new_blocks": 4,

|

| 303 |

+

"llamapro_num_groups": null,

|

| 304 |

+

"lisa_activated_layers": 0,

|

| 305 |

+

"lisa_step_interval": 20,

|

| 306 |

+

"reft_layer_key": null,

|

| 307 |

+

"reft_layers": null,

|

| 308 |

+

"reft_rank": 4,

|

| 309 |

+

"reft_intervention_type": "LoreftIntervention",

|

| 310 |

+

"reft_args": null,

|

| 311 |

+

"swanlab_token": null,

|

| 312 |

+

"swanlab_project": null,

|

| 313 |

+

"swanlab_workspace": null,

|

| 314 |

+

"swanlab_exp_name": null,

|

| 315 |

+

"swanlab_mode": "cloud",

|

| 316 |

+

"add_version": true,

|

| 317 |

+

"resume_only_model": false,

|

| 318 |

+

"create_checkpoint_symlink": false,

|

| 319 |

+

"packing": false,

|

| 320 |

+

"lazy_tokenize": true,

|

| 321 |

+

"loss_type": null,

|

| 322 |

+

"optimizer": null,

|

| 323 |

+

"metric": null,

|

| 324 |

+

"zero_hpz_partition_size": null,

|

| 325 |

+

"rank": -1,

|

| 326 |

+

"global_world_size": 1,

|

| 327 |

+

"local_world_size": 1,

|

| 328 |

+

"model_suffix": "Qwen2.5-Omni-3B",

|

| 329 |

+

"model_info": "ModelInfo(model_type='qwen2_5_omni', model_dir='/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B', torch_dtype=torch.bfloat16, max_model_len=32768, quant_method=None, quant_bits=None, rope_scaling={'mrope_section': [16, 24, 24], 'rope_type': 'default', 'type': 'default'}, config=None, task_type='causal_lm', num_labels=None)",

|

| 330 |

+

"model_meta": "ModelMeta(model_type='qwen2_5_omni', model_groups=[ModelGroup(models=[Model(ms_model_id='Qwen/Qwen2.5-Omni-3B', hf_model_id='Qwen/Qwen2.5-Omni-3B', model_path=None, ms_revision=None, hf_revision=None), Model(ms_model_id='Qwen/Qwen2.5-Omni-7B', hf_model_id='Qwen/Qwen2.5-Omni-7B', model_path=None, ms_revision=None, hf_revision=None)], ignore_patterns=None, requires=None, tags=[])], template='qwen2_5_omni', get_function=<function get_model_tokenizer_qwen2_5_omni at 0x7f21a6587880>, model_arch='qwen2_5_omni', architectures=['Qwen2_5OmniModel'], additional_saved_files=['spk_dict.pt'], torch_dtype=None, is_multimodal=True, is_reward=False, task_type=None, ignore_patterns=['*.bin', '*.safetensors'], requires=['transformers>=4.50', 'soundfile', 'qwen_omni_utils', 'decord'], tags=[])",

|

| 331 |

+

"model_dir": "/home/xj_data/jishengpeng/Code/Qwen2.5-Omni-3B",

|

| 332 |

+

"hub": "<class 'swift.hub.hub.MSHub'>",

|

| 333 |

+

"evaluation_strategy": "steps",

|

| 334 |

+

"training_args": "Seq2SeqTrainingArguments(output_dir='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424', overwrite_output_dir=False, do_train=False, do_eval=True, do_predict=False, eval_strategy=<IntervalStrategy.STEPS: 'steps'>, prediction_loss_only=False, per_device_train_batch_size=2, per_device_eval_batch_size=1, per_gpu_train_batch_size=None, per_gpu_eval_batch_size=None, gradient_accumulation_steps=8, eval_accumulation_steps=None, eval_delay=0, torch_empty_cache_steps=None, learning_rate=1e-05, weight_decay=0.1, adam_beta1=0.9, adam_beta2=0.95, adam_epsilon=1e-08, max_grad_norm=1.0, num_train_epochs=3.0, max_steps=-1, lr_scheduler_type=<SchedulerType.COSINE: 'cosine'>, lr_scheduler_kwargs=None, warmup_ratio=0.0, warmup_steps=0, log_level='passive', log_level_replica='warning', log_on_each_node=True, logging_dir='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424/runs', logging_strategy=<IntervalStrategy.STEPS: 'steps'>, logging_first_step=True, logging_steps=5, logging_nan_inf_filter=True, save_strategy=<SaveStrategy.STEPS: 'steps'>, save_steps=500, save_total_limit=None, save_safetensors=True, save_on_each_node=False, save_only_model=False, restore_callback_states_from_checkpoint=False, no_cuda=False, use_cpu=False, use_mps_device=False, seed=42, data_seed=42, jit_mode_eval=False, use_ipex=False, bf16=True, fp16=False, fp16_opt_level='O1', half_precision_backend='auto', bf16_full_eval=False, fp16_full_eval=False, tf32=None, local_rank=0, ddp_backend=None, tpu_num_cores=None, tpu_metrics_debug=False, debug=[], dataloader_drop_last=False, eval_steps=500, dataloader_num_workers=1, dataloader_prefetch_factor=10, past_index=-1, run_name='/home/xj_data/jishengpeng/InteractSpeech/ms-swift/result/output_3B_fulltune_interact/v0-20250513-030424', disable_tqdm=False, remove_unused_columns=False, label_names=None, load_best_model_at_end=False, 
metric_for_best_model='loss', greater_is_better=False, ignore_data_skip=False, fsdp=[], fsdp_min_num_params=0, fsdp_config={'min_num_params': 0, 'xla': False, 'xla_fsdp_v2': False, 'xla_fsdp_grad_ckpt': False}, fsdp_transformer_layer_cls_to_wrap=None, accelerator_config=AcceleratorConfig(split_batches=False, dispatch_batches=False, even_batches=True, use_seedable_sampler=True, non_blocking=False, gradient_accumulation_kwargs=None, use_configured_state=False), deepspeed=None, label_smoothing_factor=0.0, optim=<OptimizerNames.ADAMW_TORCH: 'adamw_torch'>, optim_args=None, adafactor=False, group_by_length=False, length_column_name='length', report_to=['tensorboard'], ddp_find_unused_parameters=None, ddp_bucket_cap_mb=None, ddp_broadcast_buffers=None, dataloader_pin_memory=True, dataloader_persistent_workers=False, skip_memory_metrics=True, use_legacy_prediction_loop=False, push_to_hub=False, resume_from_checkpoint=None, hub_model_id=None, hub_strategy=<HubStrategy.EVERY_SAVE: 'every_save'>, hub_token=None, hub_private_repo=None, hub_always_push=False, gradient_checkpointing=True, gradient_checkpointing_kwargs=None, include_inputs_for_metrics=False, include_for_metrics=[], eval_do_concat_batches=True, fp16_backend='auto', push_to_hub_model_id=None, push_to_hub_organization=None, push_to_hub_token=None, mp_parameters='', auto_find_batch_size=False, full_determinism=False, torchdynamo=None, ray_scope='last', ddp_timeout=1800, torch_compile=False, torch_compile_backend=None, torch_compile_mode=None, include_tokens_per_second=None, include_num_input_tokens_seen=None, neftune_noise_alpha=None, optim_target_modules=None, batch_eval_metrics=False, eval_on_start=False, use_liger_kernel=False, eval_use_gather_object=False, average_tokens_across_devices=None, sortish_sampler=False, predict_with_generate=False, generation_max_length=None, generation_num_beams=None, generation_config=None, check_model=True, acc_strategy='token', train_dataloader_shuffle=True, max_epochs=None, 
metric_warmup_step=0, fsdp_num=1, acc_steps=1, eval_use_evalscope=False, eval_datasets=[], eval_limit=None, eval_datasets_args=None, eval_generation_config=None, train_type='full', optimizer=None, local_repo_path=None, galore_config=None)"

|

| 335 |

+

}
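Note on the values above: the top-level `gradient_accumulation_steps` is `null`, but the resolved `training_args` string shows `gradient_accumulation_steps=8`. With `per_device_train_batch_size` of 2 and `global_world_size` of 1, the following minimal sketch computes the number of samples per optimizer step. The function name is illustrative and is not part of ms-swift or transformers.

```python
# Values copied from the args.json diff above; the helper itself is hypothetical.
def effective_batch_size(per_device: int, grad_accum: int, world_size: int) -> int:
    """Number of samples that contribute to a single optimizer step."""
    return per_device * grad_accum * world_size


if __name__ == "__main__":
    # per_device_train_batch_size=2, gradient_accumulation_steps=8, global_world_size=1
    print(effective_batch_size(per_device=2, grad_accum=8, world_size=1))  # 16
```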

|

v0-20250513-030424/checkpoint-9/chat_template.jinja

ADDED

|

@@ -0,0 +1,7 @@

|

| 1 |

+

{% set audio_count = namespace(value=0) %}{% set image_count = namespace(value=0) %}{% set video_count = namespace(value=0) %}{% for message in messages %}{% if loop.first and message['role'] != 'system' %}<|im_start|>system

|

| 2 |

+

You are a helpful assistant.<|im_end|>

|

| 3 |

+

{% endif %}<|im_start|>{{ message['role'] }}

|

| 4 |

+

{% if message['content'] is string %}{{ message['content'] }}<|im_end|>

|

| 5 |

+

{% else %}{% for content in message['content'] %}{% if content['type'] == 'image' or 'image' in content or 'image_url' in content %}{% set image_count.value = image_count.value + 1 %}{% if add_vision_id %}Picture {{ image_count.value }}: {% endif %}<|vision_bos|><|IMAGE|><|vision_eos|>{% elif content['type'] == 'audio' or 'audio' in content or 'audio_url' in content %}{% set audio_count.value = audio_count.value + 1 %}{% if add_audio_id %}Audio {{ audio_count.value }}: {% endif %}<|audio_bos|><|AUDIO|><|audio_eos|>{% elif content['type'] == 'video' or 'video' in content %}{% set video_count.value = video_count.value + 1 %}{% if add_vision_id %}Video {{ video_count.value }}: {% endif %}<|vision_bos|><|VIDEO|><|vision_eos|>{% elif 'text' in content %}{{ content['text'] }}{% endif %}{% endfor %}<|im_end|>

|

| 6 |

+

{% endif %}{% endfor %}{% if add_generation_prompt %}<|im_start|>assistant

|

| 7 |

+

{% endif %}
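To make the branching in this template easier to follow, here is a plain-Python approximation of what it emits. It is a sketch, not the Jinja engine: `render_chat` is a hypothetical helper, and the newline handling assumes the template is loaded so that only the literal newlines after text and `{{ ... }}` expressions survive.

```python
SYSTEM_DEFAULT = "You are a helpful assistant."

# (placeholder token, label prefix, counter key) for each media kind in the template
MEDIA = {
    "image": ("<|vision_bos|><|IMAGE|><|vision_eos|>", "Picture", "image"),
    "audio": ("<|audio_bos|><|AUDIO|><|audio_eos|>", "Audio", "audio"),
    "video": ("<|vision_bos|><|VIDEO|><|vision_eos|>", "Video", "video"),
}


def classify(part):
    """Mirror the if/elif chain of the template for one content item."""
    kind = part.get("type")
    if kind == "image" or "image" in part or "image_url" in part:
        return "image"
    if kind == "audio" or "audio" in part or "audio_url" in part:
        return "audio"
    if kind == "video" or "video" in part:
        return "video"
    return "text" if "text" in part else None


def render_chat(messages, add_generation_prompt=False,
                add_vision_id=False, add_audio_id=False):
    counts = {"image": 0, "audio": 0, "video": 0}
    out = []
    for i, message in enumerate(messages):
        # A default system turn is injected only if the first message is not one.
        if i == 0 and message["role"] != "system":
            out.append(f"<|im_start|>system\n{SYSTEM_DEFAULT}<|im_end|>\n")
        out.append(f"<|im_start|>{message['role']}\n")
        content = message["content"]
        if isinstance(content, str):
            out.append(content)
        else:
            for part in content:
                kind = classify(part)
                if kind in MEDIA:
                    token, label, key = MEDIA[kind]
                    counts[key] += 1
                    numbered = add_audio_id if kind == "audio" else add_vision_id
                    if numbered:
                        out.append(f"{label} {counts[key]}: ")
                    out.append(token)
                elif kind == "text":
                    out.append(part["text"])
        out.append("<|im_end|>\n")
    if add_generation_prompt:
        out.append("<|im_start|>assistant\n")
    return "".join(out)
```

For example, `render_chat([{"role": "user", "content": "Hi"}], add_generation_prompt=True)` yields a default system turn, the user turn, and an open assistant turn.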

|

v0-20250513-030424/checkpoint-9/config.json

ADDED

|

@@ -0,0 +1,574 @@

|
